Fairness Opinions

Evaluating a Buyer’s Shares from the Seller’s Perspective – 2025 Update

Perhaps the IPO market is signaling that a notable improvement in the M&A market may occur after a so-so start in 2025. Financial services companies have been active, with notable IPOs such as Circle Internet Group (NYSE:CRCL) and Chime Financial (NASDAQ:CHYM). A pick-up in IPO activity historically has presaged a pick-up in M&A.

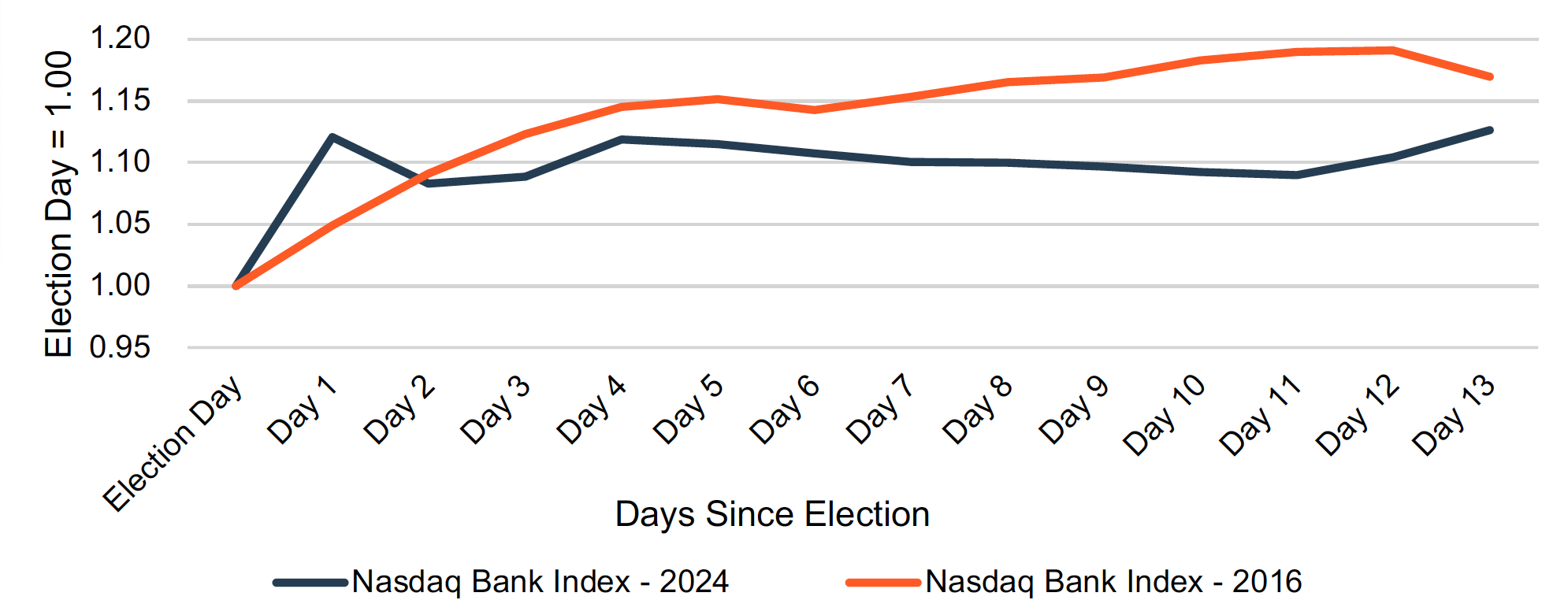

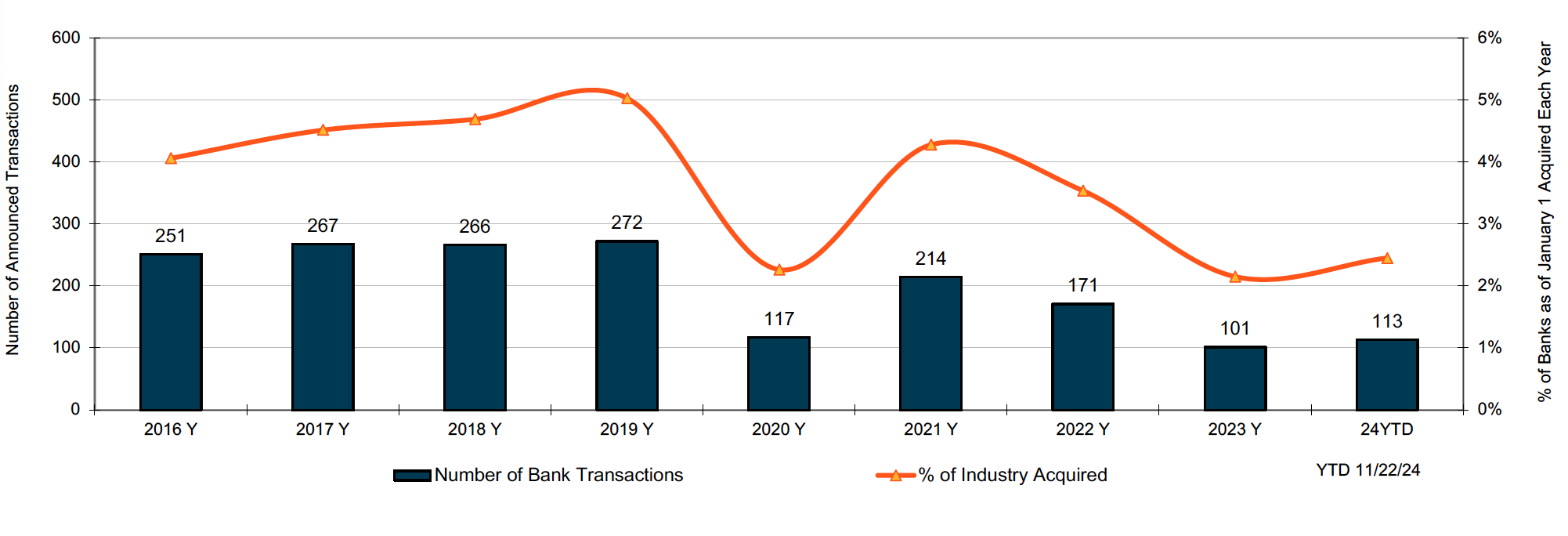

Bank M&A Deal Flow: 2025 Trends in Context

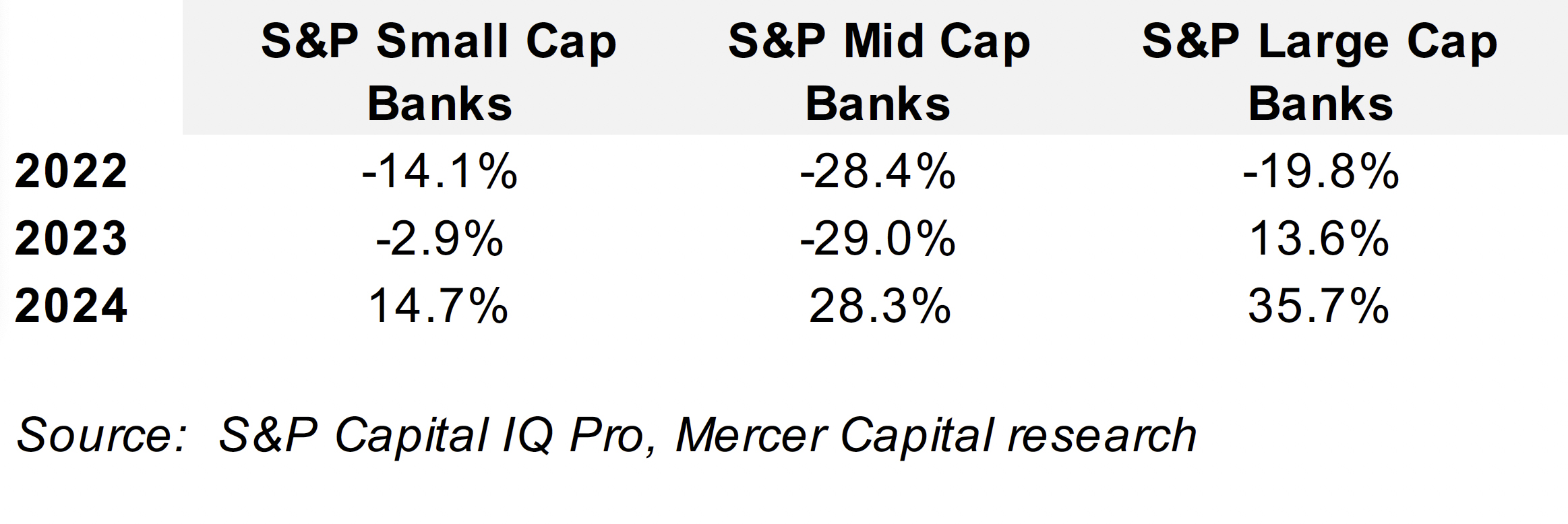

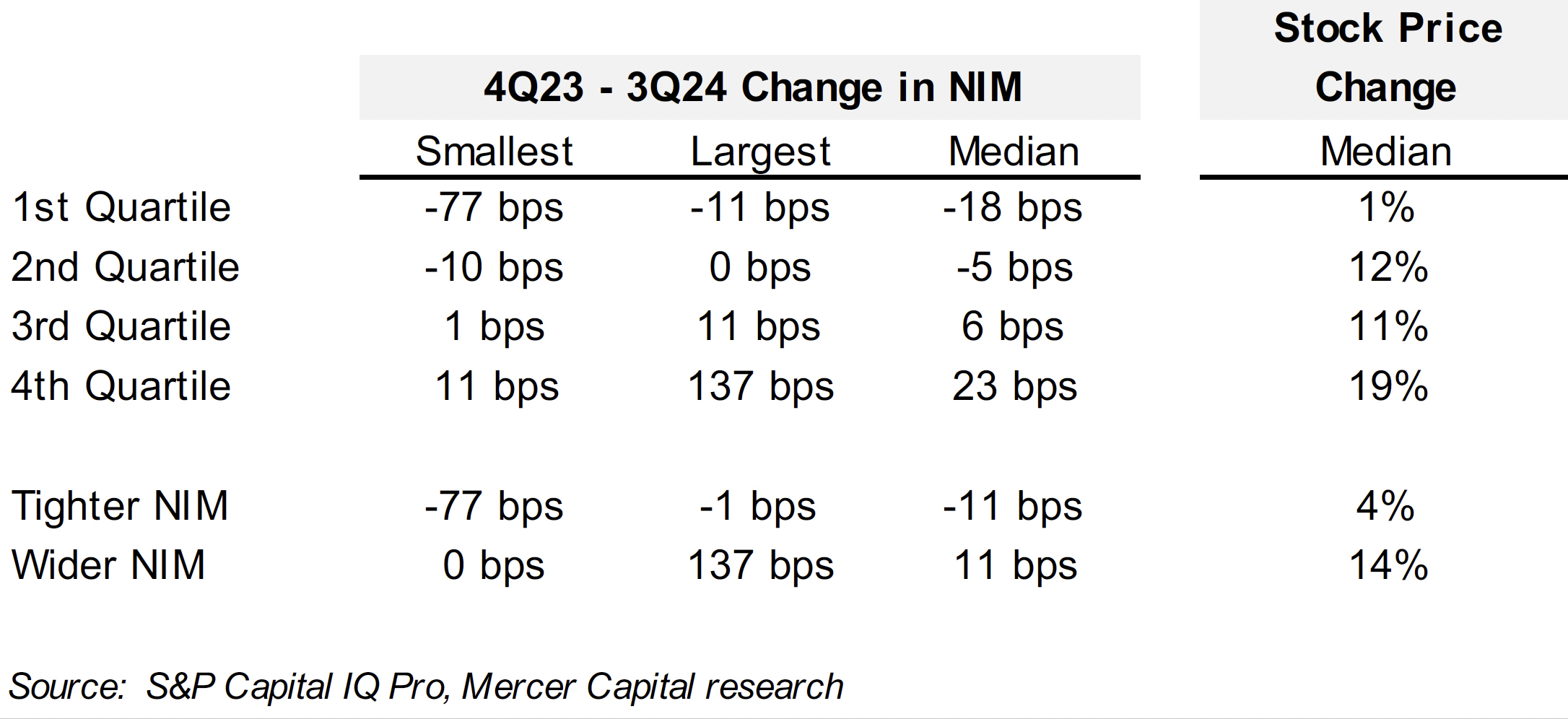

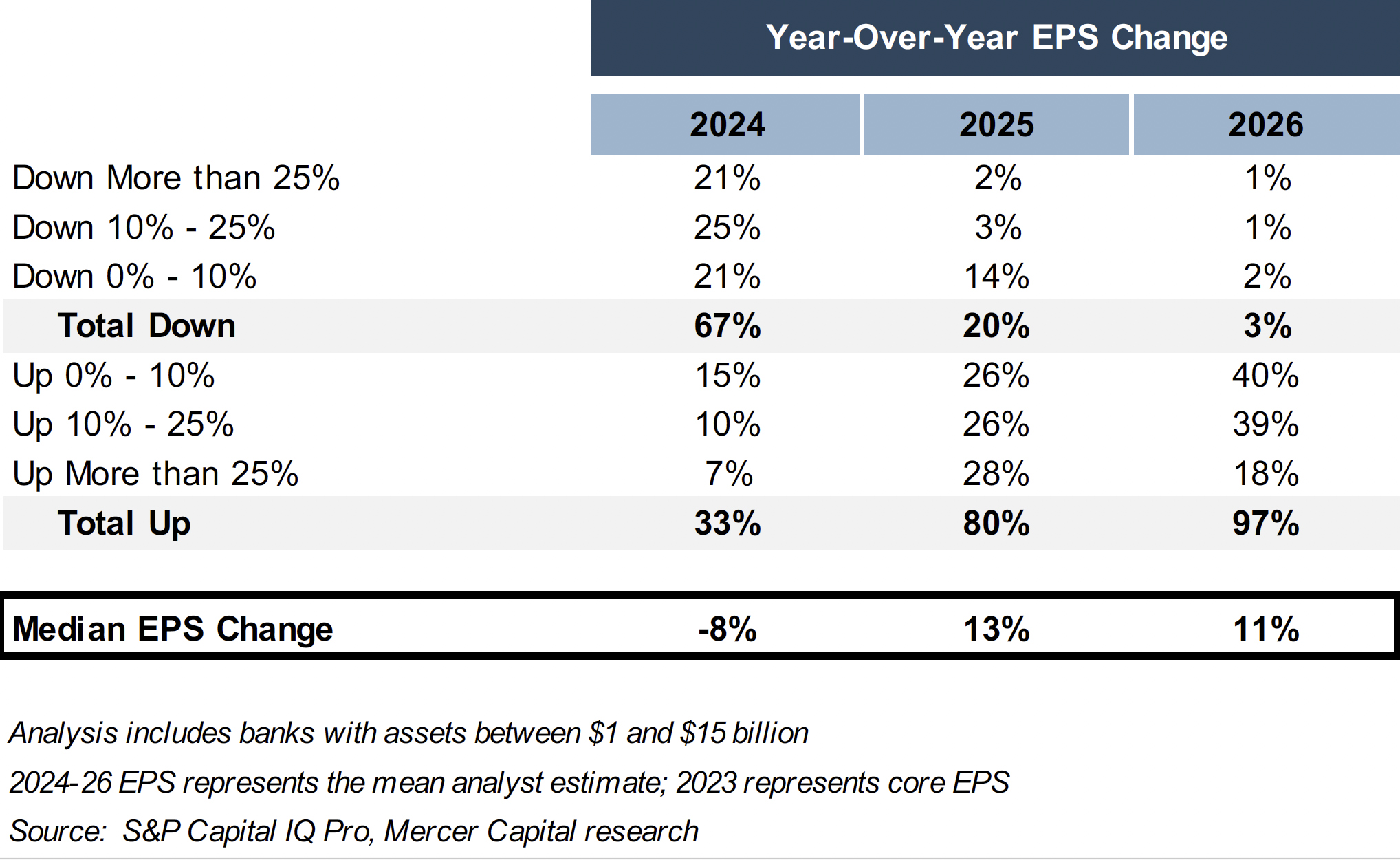

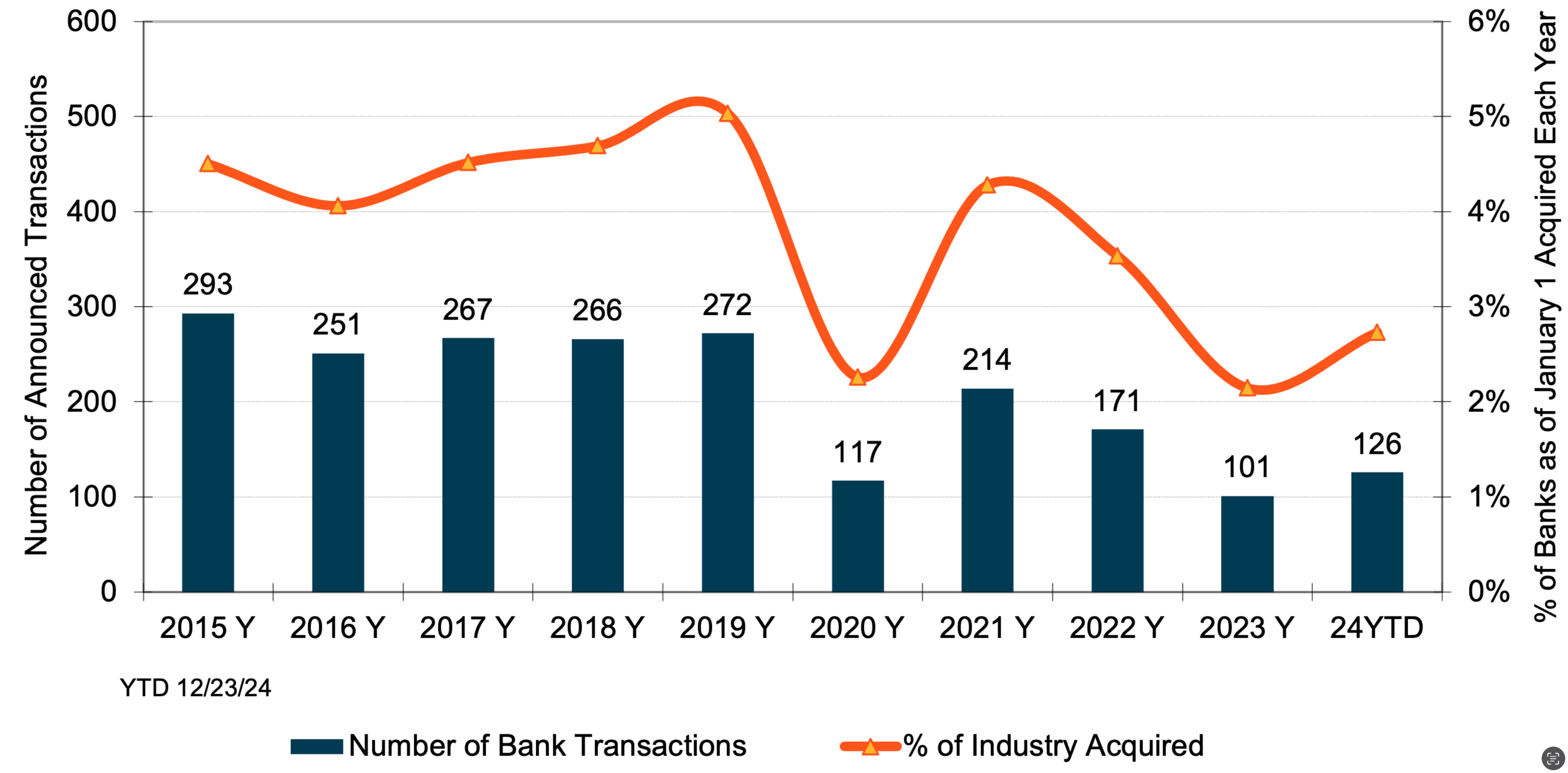

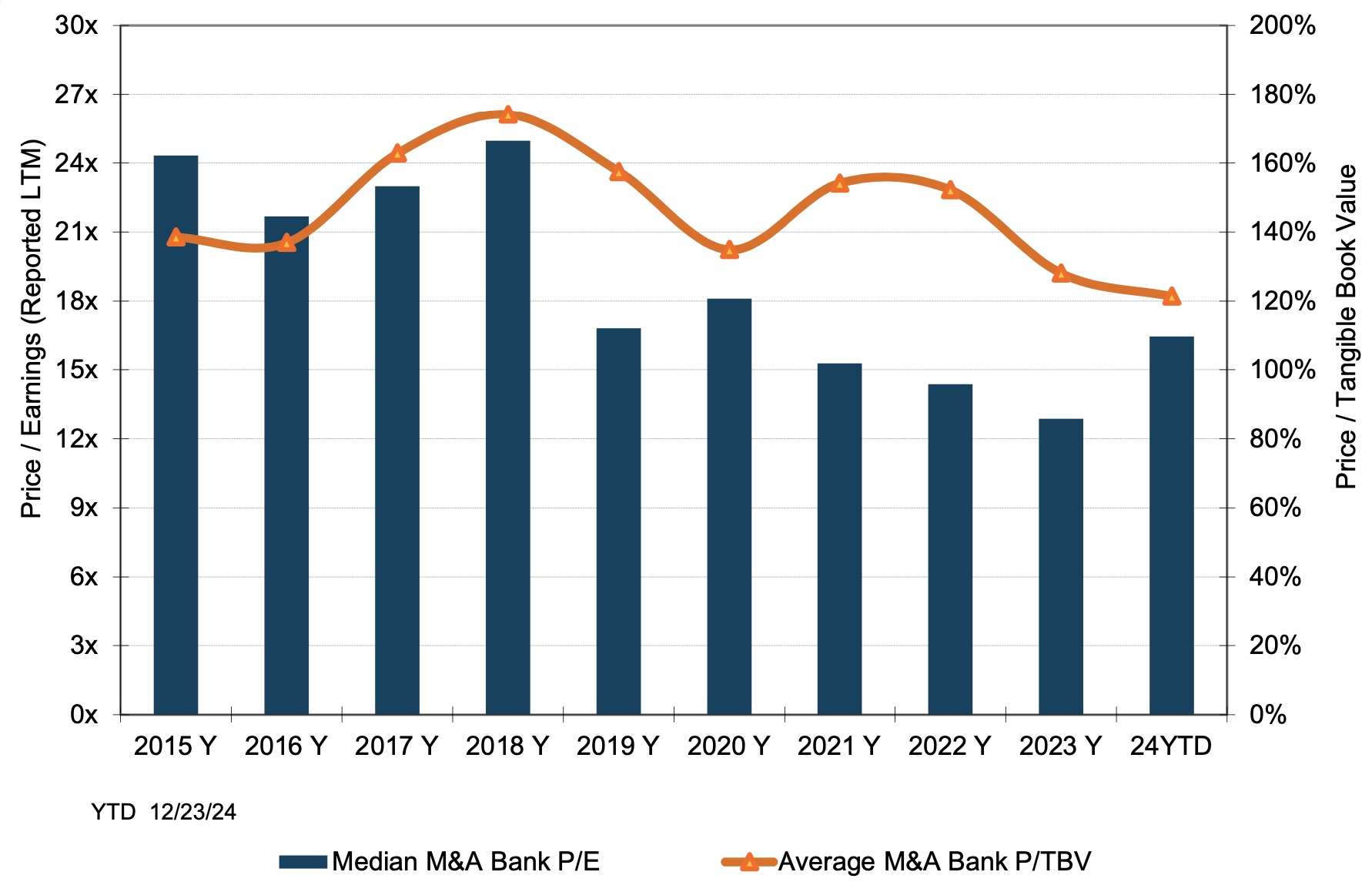

As of June 27, 2025, 70 bank deals had been announced; if that pace were sustained, the annualized total would equate to about 3% of the 4,487 charters as of January 1. Over the past 35 years, typically 2% to 4% of the industry has been acquired each year. The average P/TBV and P/E for the 26 deals with reported pricing were 146% and 17x, respectively.
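The "about 3%" annualized figure can be roughly reproduced by pro-rating the year-to-date count by calendar days elapsed. The article does not state its annualization method, so the sketch below assumes simple calendar-day pro-rating. The function name is hypothetical.

```python
from datetime import date

def annualized_deal_count(deals_ytd: int, as_of: date) -> float:
    """Pro-rate a year-to-date deal count to a full-year pace by calendar days elapsed."""
    start = date(as_of.year, 1, 1)
    days_elapsed = (as_of - start).days + 1          # count the as-of date itself
    days_in_year = (date(as_of.year + 1, 1, 1) - start).days
    return deals_ytd * days_in_year / days_elapsed

CHARTERS_AT_JAN_1 = 4_487  # bank charters as of January 1, from the text

pace = annualized_deal_count(70, date(2025, 6, 27))
share_acquired = pace / CHARTERS_AT_JAN_1
print(f"Annualized pace: {pace:.0f} deals, or {share_acquired:.1%} of charters")
```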

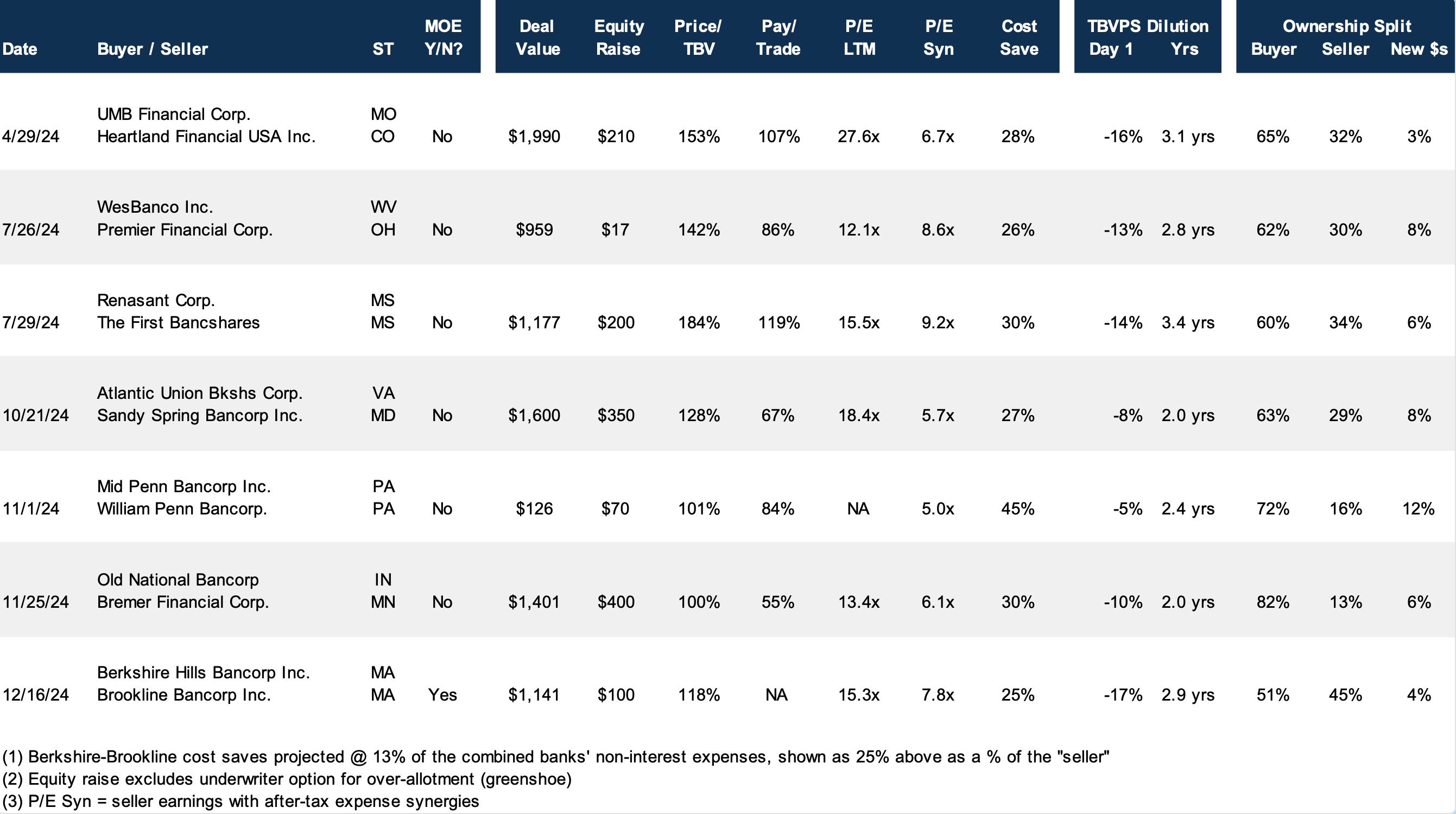

Excluding small transactions, common shares issued by bank acquirers usually are the dominant form of consideration sellers receive. Buyers have some flexibility regarding the number of shares issued and the mix of stock and cash. However, they are limited in the amount of dilution in tangible book value per share (“TBVPS”) they are willing to accept, and they require visibility into the EPS accretion over the next several years that will recapture the dilution.
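The sketch below makes the dilution and earn-back trade-off concrete using one simple convention: day-one TBVPS dilution per share divided by the annual EPS accretion the deal is expected to add, assuming that accretion is fully retained. The function names and buyer figures are hypothetical. Practitioners also use other conventions, such as projecting pro forma and standalone TBVPS until the two cross, which can produce different answers.

```python
def tbvps_dilution_pct(standalone_tbvps: float, pro_forma_tbvps: float) -> float:
    """Day-one dilution as a fraction of the buyer's standalone TBVPS."""
    return (standalone_tbvps - pro_forma_tbvps) / standalone_tbvps

def simple_earn_back_years(standalone_tbvps: float, pro_forma_tbvps: float,
                           annual_eps_accretion: float) -> float:
    """Years of deal-driven EPS accretion (assumed fully retained) needed to recoup dilution."""
    dilution_per_share = standalone_tbvps - pro_forma_tbvps
    if dilution_per_share <= 0:
        return 0.0            # the deal is not dilutive, so there is nothing to earn back
    if annual_eps_accretion <= 0:
        return float("inf")   # no accretion, so the dilution is never recaptured
    return dilution_per_share / annual_eps_accretion

# Hypothetical buyer: $20.00 standalone TBVPS, $19.00 pro forma, $0.40 of annual EPS accretion
dilution = tbvps_dilution_pct(20.00, 19.00)
years = simple_earn_back_years(20.00, 19.00, 0.40)
print(f"Day-one TBVPS dilution: {dilution:.1%}; earn-back: {years:.1f} years")
```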

Because the number of shares will be relatively fixed, the value of a transaction and the multiple(s) the seller hopes to realize are a function of the buyer’s valuation. High-multiple stocks represent strong acquisition currencies for acquisitive companies because fewer shares must be issued to achieve a targeted dollar value.

It is important for sellers to keep in mind that, when the consideration will consist of the buyer’s common shares, negotiations are about the exchange ratio rather than price. Price is the product of the exchange ratio and the buyer’s share price. In a stock swap transaction, a fairness opinion will address the fairness of the exchange ratio and the consideration received by selling shareholders, not “price” per se.
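The exchange ratio is fixed while the buyer’s share price floats, so the implied per-share price and the pricing multiples move with the buyer’s stock. The sketch below illustrates that arithmetic. The exchange ratio, buyer prices, and seller per-share figures are all hypothetical.

```python
def deal_value_per_share(exchange_ratio: float, buyer_price: float) -> float:
    """Per-share value received by the seller: exchange ratio times the buyer's share price."""
    return exchange_ratio * buyer_price

EXCHANGE_RATIO = 0.80  # hypothetical: buyer shares issued per seller share
SELLER_TBVPS = 25.00   # hypothetical seller tangible book value per share
SELLER_EPS = 2.50      # hypothetical seller earnings per share

# Same negotiated exchange ratio, three different buyer share prices
for buyer_price in (55.00, 50.00, 45.00):
    price = deal_value_per_share(EXCHANGE_RATIO, buyer_price)
    print(f"Buyer at ${buyer_price:.2f}: ${price:.2f} per share, "
          f"{price / SELLER_TBVPS:.0%} of TBVPS, {price / SELLER_EPS:.1f}x EPS")
```

A 10% move in the buyer’s shares moves the seller’s implied price and multiples by the same 10%, even though nothing in the negotiated terms has changed.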

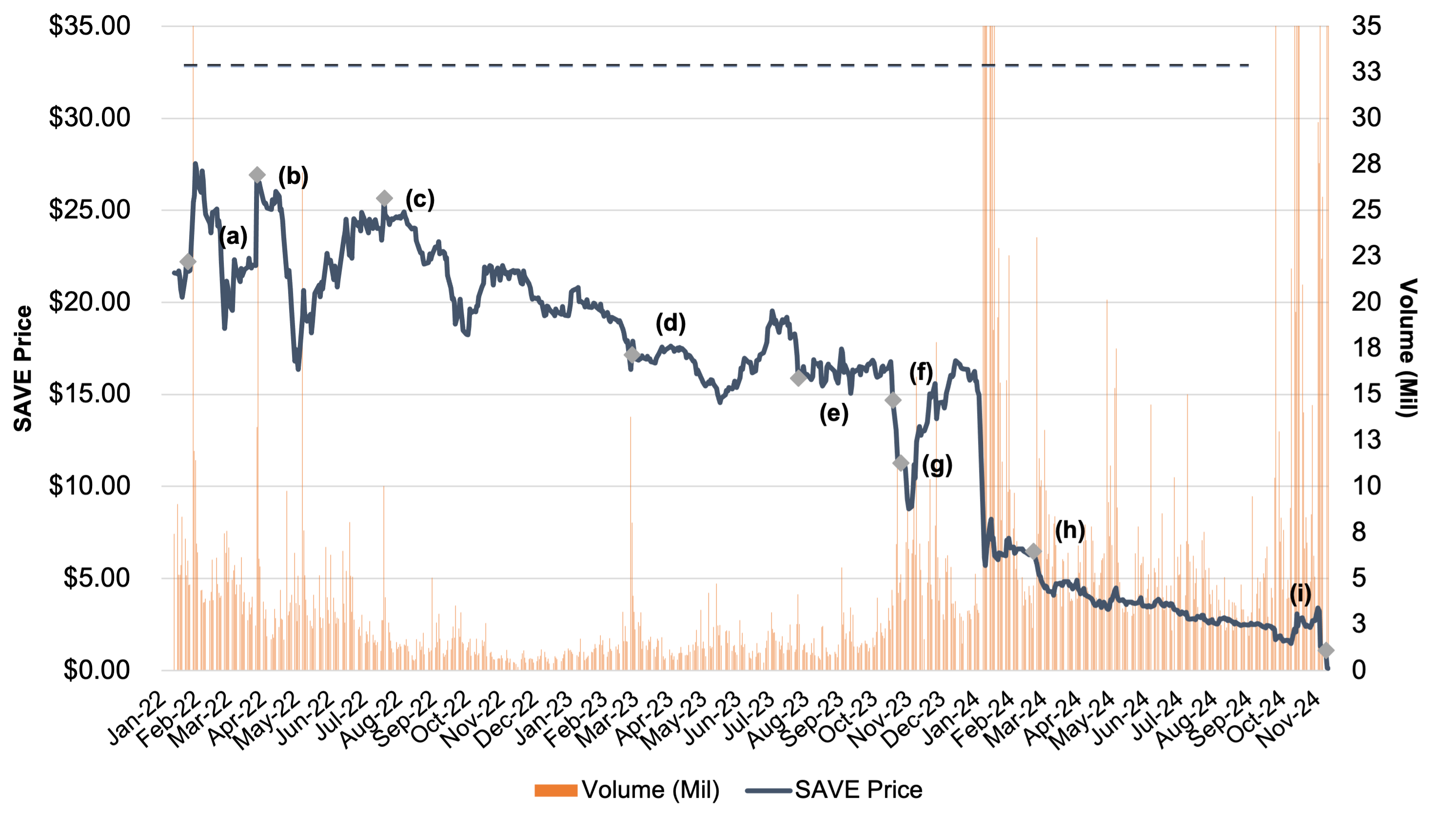

The Complexity of Evaluating Offers

Unlike with cash deals, comparing stock swap offers and assessing their fairness (and value) requires much more deliberation by a board of directors and its advisor. One offer may carry a higher nominal price, but the acquirer’s shares may trade at a premium valuation. A competing offer may equate to a lower price, but with shares that entail less risk. Exchange ratios can also be evaluated by comparing the selling shareholders’ pro forma ownership of the acquirer post-closing with the seller’s contribution of operating income, core deposits and the like.
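The sketch below is a simple contribution analysis. It compares the selling shareholders’ pro forma ownership with the seller’s share of several combined metrics. Where contribution exceeds ownership, the seller is contributing more on that metric than it receives in ownership. The function names, share counts, and metric values are hypothetical.

```python
def seller_pro_forma_ownership(seller_shares: float, exchange_ratio: float,
                               buyer_shares: float) -> float:
    """Fraction of the combined company owned by former seller shareholders."""
    new_shares = seller_shares * exchange_ratio   # buyer shares issued in the merger
    return new_shares / (buyer_shares + new_shares)

def contribution_pct(seller_amount: float, buyer_amount: float) -> float:
    """Seller's share of a combined metric (e.g., operating income, core deposits)."""
    return seller_amount / (seller_amount + buyer_amount)

# Hypothetical: 10 million seller shares, 0.80 exchange ratio, 32 million buyer shares
ownership = seller_pro_forma_ownership(10e6, 0.80, 32e6)

# Hypothetical standalone metrics in $ millions: (seller, buyer)
metrics = {
    "pre-tax pre-provision income": (18.0, 82.0),
    "core deposits": (1_100.0, 3_900.0),
    "tangible common equity": (150.0, 600.0),
}

print(f"Seller pro forma ownership: {ownership:.1%}")
for name, (seller, buyer) in metrics.items():
    share = contribution_pct(seller, buyer)
    print(f"{name:>30}: contributes {share:.1%} ({share - ownership:+.1%} vs. ownership)")
```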

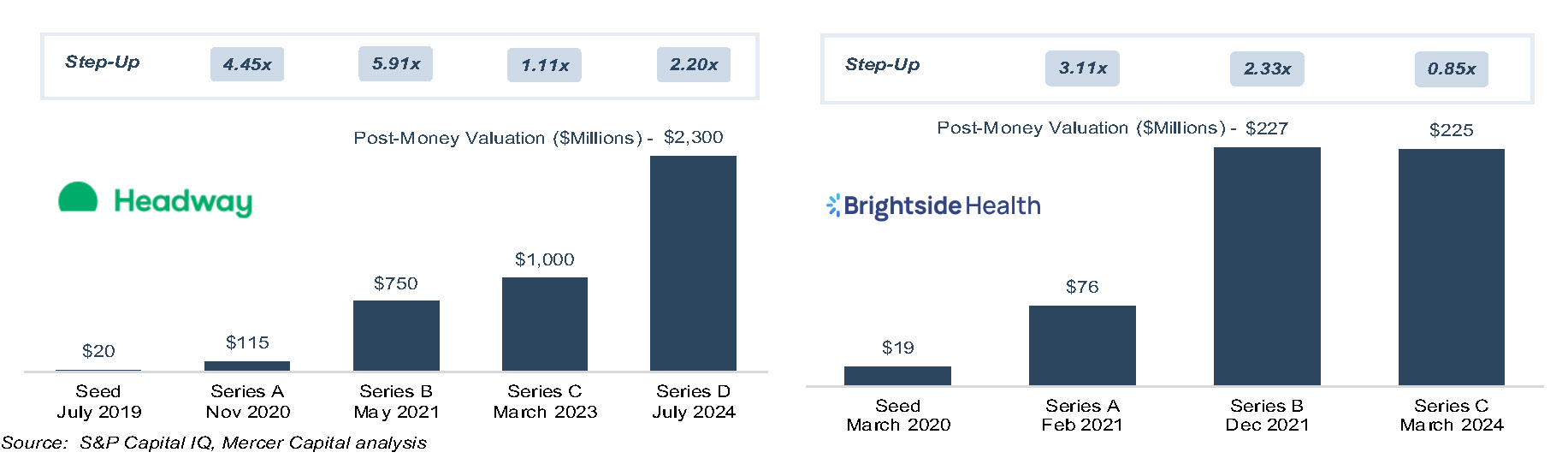

When sellers focus on price, it is easier, all else equal, for acquirers to ink a deal when their shares trade at high multiples of TBVPS and EPS. However, high-multiple stocks represent an under-appreciated risk to sellers who receive the shares as consideration. Accepting the buyer’s stock raises a number of questions, most of which come down to one: what are the investment merits of the buyer’s shares? The answer may not be obvious even when the buyer’s shares are actively traded.

Board Oversight

Our experience is that some, if not most, members of a board weighing an acquisition proposal do not have the background to thoroughly evaluate the buyer’s shares. Even when financial advisors are involved, the buyer’s shares still may not be thoroughly vetted because too much focus is placed on “price” and too little on “value.”

The Role of Fairness Opinions in M&A

Fairness opinions seek to answer the question of whether the proposed consideration is fair to a company’s shareholders from a financial point of view. The opinion should be backed by a robust analysis of all the relevant factors considered in rendering it, including an evaluation of the shares to be issued to the selling company’s shareholders. The intent is not to express an opinion about where the shares may trade in the future. Rather, it is to evaluate the investment merits of the shares before and after the transaction is consummated.

Key Questions When Evaluating Acquirer Shares

As we have advised in the past, the key questions to ask about the buyer’s shares remain the same. They are:

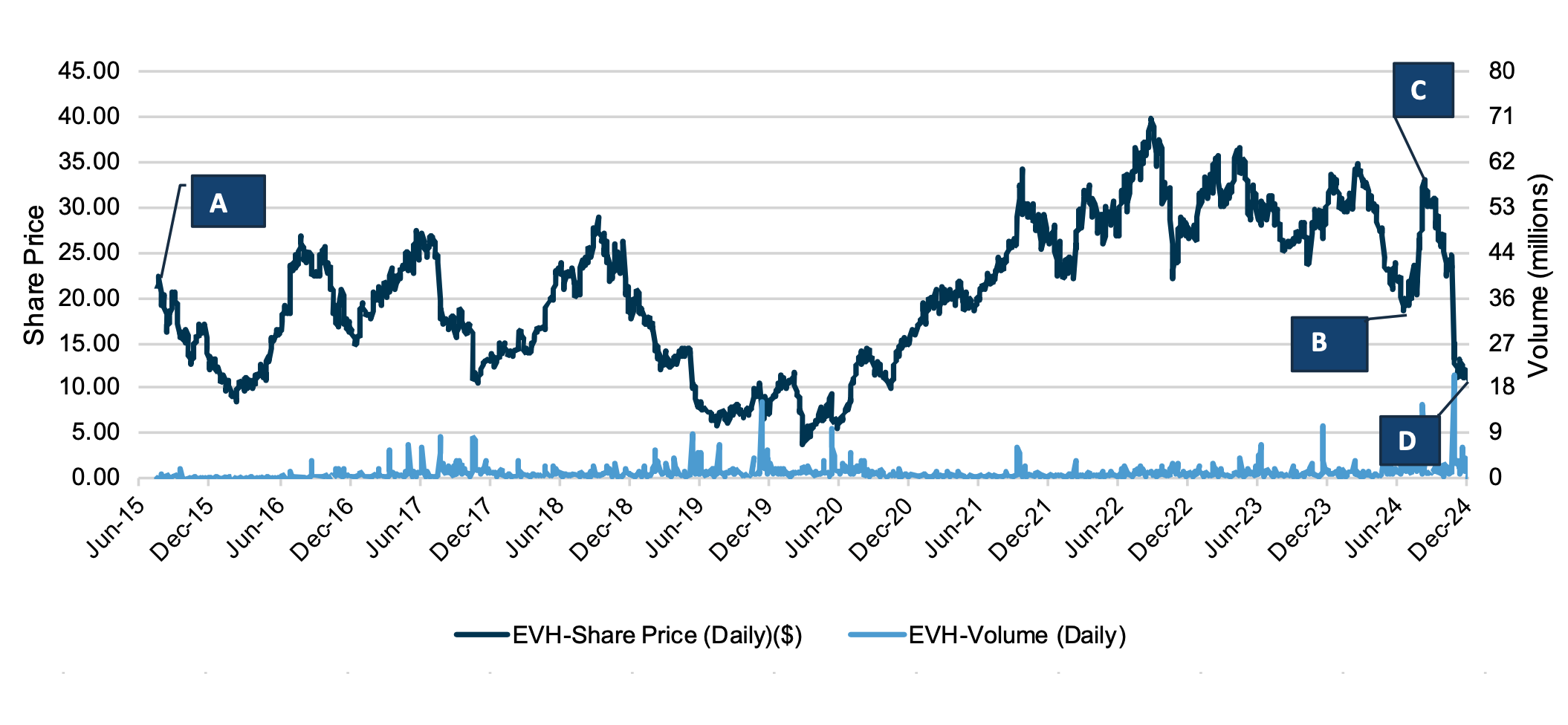

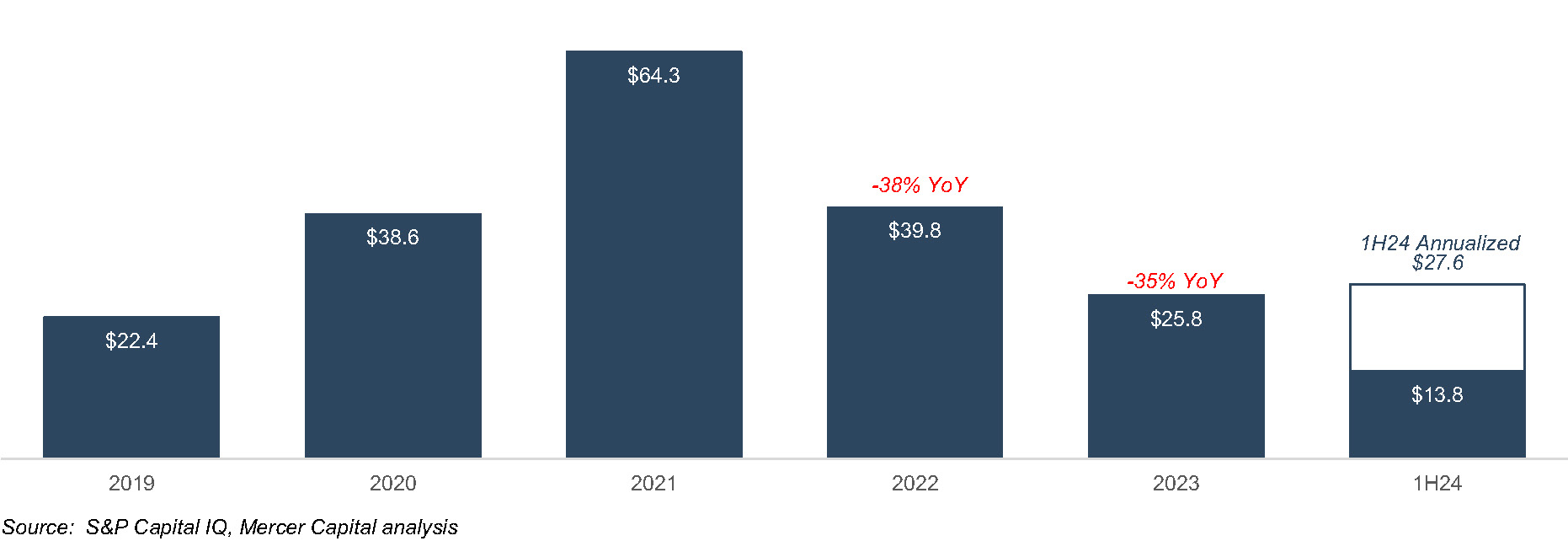

- Liquidity of the Shares. What is the capacity to sell the shares issued in the merger? SEC registration and NASDAQ or NYSE listings do not guarantee that large blocks can be liquidated efficiently. Shares traded over the counter (OTC) should be scrutinized, especially if the acquirer is not an SEC registrant. Generally, the higher the institutional ownership, the better the liquidity.

- Profitability and Revenue Trends. The analysis should consider the buyer’s historical growth and projected growth in revenues, pretax pre-provision operating income and net income as well as various profitability ratios before and after consideration of credit costs. The quality of earnings and a comparison of core vs. reported earnings over a multi-year period should be evaluated.

- Pro Forma Impact. The analysis should consider the impact of a proposed transaction on the pro forma balance sheet, income statement and capital ratios in addition to dilution or accretion in earnings per share and tangible book value per share both from the seller’s and buyer’s perspective.

- Tangible BVPS Earn-Back. The projected period to earn back day-one dilution to TBVPS is an important consideration for the buyer. In the aftermath of the Great Financial Crisis (GFC), an acceptable earn-back period was on the order of three to five years. Today it is two to three years, because institutional shareholders have indirectly tightened the pricing box for M&A by demanding that publicly traded buyers limit TBVPS dilution to short payback periods.

- Dividends. Sellers should not be overly swayed by the pick-up in dividends from swapping into the buyer’s shares. Even so, multiple studies have demonstrated that dividends account for a sizable portion of an investor’s return over long periods of time. Sellers should examine the sustainability of current dividends and the prospect for increases (or decreases). Also, if the dividend yield is notably above the peer average, the seller should ask why: is it payout related, or are the shares depressed?

- Capital and the Parent Capital Stack. Sellers should have a full understanding of the buyer’s pro forma regulatory capital ratios, both at the bank level and on a consolidated basis (for large bank holding companies). Separately, parent company capital stacks often are overlooked because of the emphasis placed on capital ratios and the combined bank-parent financial statements. Sellers should have a complete understanding of the parent company’s capital structure and the amount of bank earnings that must be paid to the parent company for debt service and shareholder dividends.

- Loan Portfolio Concentrations. Sellers should understand concentrations in the buyer’s loan portfolio, outsized hold positions, and review the source of historical and expected losses.

- Ability to Raise Cash to Close. What is the source of funds for the buyer to fund the cash portion of consideration? If the buyer has to go to market to issue equity and/or debt, what is the contingency plan if unfavorable market conditions preclude floating an issue?

- Consensus Analyst Estimates. If the buyer is publicly traded and has analyst coverage, consideration should be given to Street expectations vs. what the diligence process determines. If Street expectations are too high, then the shares may be vulnerable once investors reassess their earnings and growth expectations.

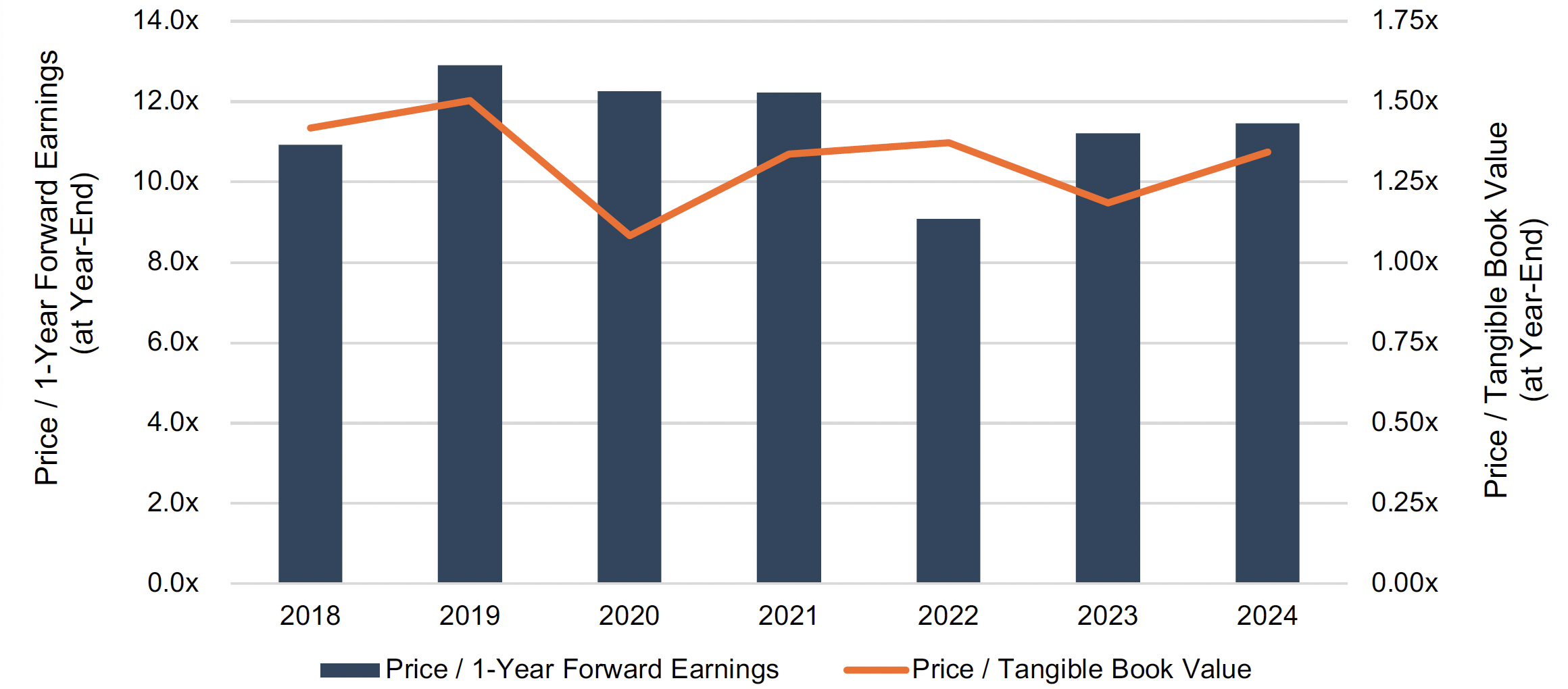

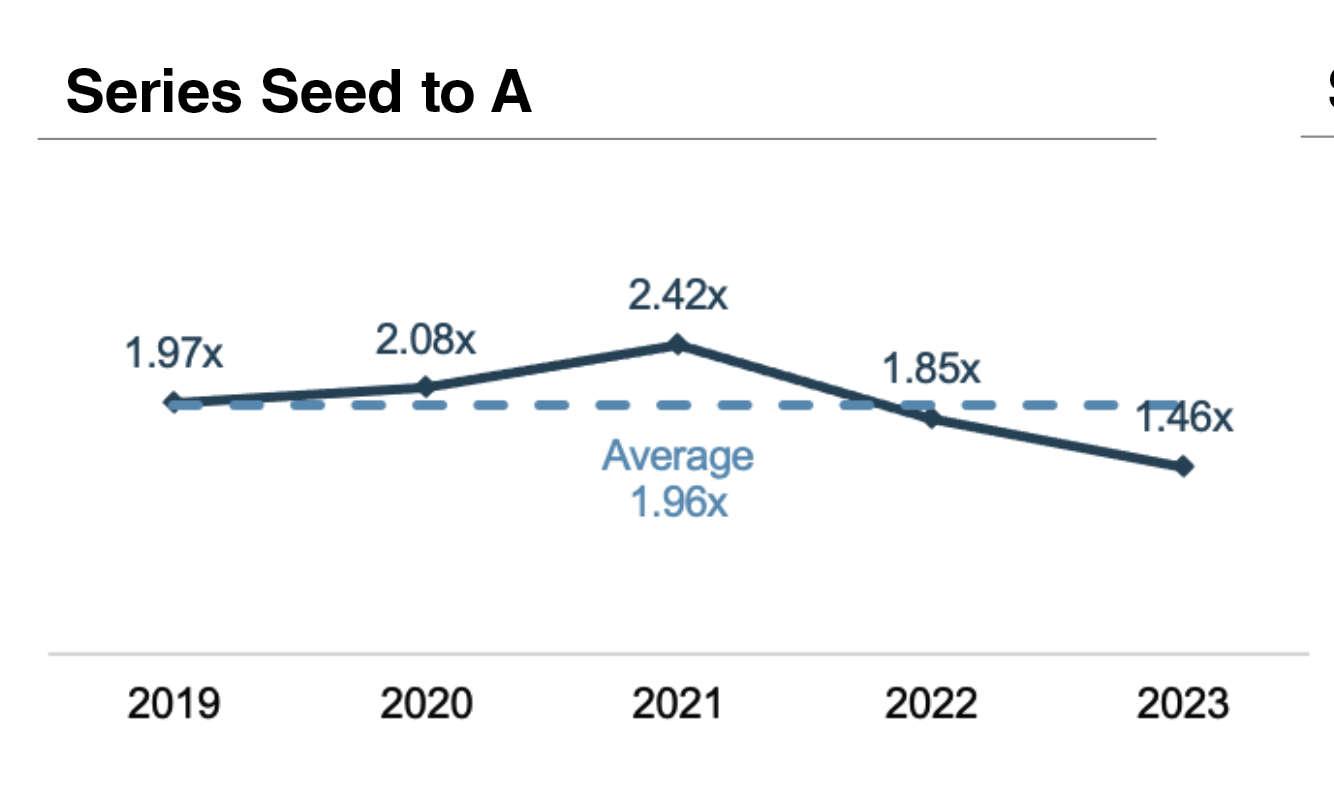

- Valuation. Like profitability, valuation of the buyer’s shares should be judged against its own history, against a peer group today, and against a peer group through time. The last comparison shows how investors’ views of the shares may have evolved through market and profit cycles. Valuation is rarely a catalyst for a stock, but both absolute and relative valuation speak to one aspect of investment risk.

- Share Performance. Sellers should understand the sources of the buyer’s share performance over several multi-year holding periods, with returns disaggregated into three components: EPS growth, multiple expansion or contraction, and the impact of reinvested dividends. If the shares have significantly outperformed an index over a given holding period, is it because earnings growth accelerated? Or is it because the shares were depressed at the beginning of the measurement period? Likewise, underperformance may signal disappointing earnings, or it may reflect a starting-point valuation that was unusually high.

- Strategic Position. Assuming an acquisition is material for the buyer, directors of the selling board should consider the buyer’s strategic position, asking such questions as how attractive the pro forma company would be to other acquirers.

- Contingent Liabilities. Contingent liabilities are a standard item on the due diligence punch list for a buyer. Sellers should evaluate contingent liabilities too.
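The three-way split of share performance can be sketched as follows. The sketch assumes that P/E is measured on the same EPS used for growth at each date, so that price equals EPS times P/E. Whatever total return remains after the price change is attributed to dividends and their reinvestment. The function name and all figures are hypothetical.

```python
import math

def decompose_total_return(eps_start: float, eps_end: float,
                           pe_start: float, pe_end: float,
                           total_return: float) -> dict:
    """Split a cumulative total return into multiplicative growth factors.

    Price = EPS x P/E, so the price factor is the EPS factor times the multiple factor;
    the remaining total return is attributed to dividends and their reinvestment.
    """
    eps_factor = eps_end / eps_start
    multiple_factor = pe_end / pe_start
    price_factor = eps_factor * multiple_factor
    dividend_factor = (1 + total_return) / price_factor
    return {
        "EPS growth": eps_factor,
        "multiple change": multiple_factor,
        "reinvested dividends": dividend_factor,
    }

# Hypothetical holding period: EPS doubles, P/E compresses from 16x to 12x,
# and the shares deliver a 100% cumulative total return.
total_return = 1.00
parts = decompose_total_return(2.00, 4.00, 16.0, 12.0, total_return)
for name, factor in parts.items():
    # Log contributions are additive, so the three shares sum to 100% of the log return.
    log_share = math.log(factor) / math.log(1 + total_return)
    print(f"{name:>20}: x{factor:.3f} ({log_share:+.0%} of log total return)")
```

Expressing each factor as a share of the log return makes the components add up. That makes it easy to see, for example, when multiple contraction has offset much of the benefit of dividends.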

Conclusion: Value, Risk, and the Post-Deal Reality

The list does not encompass every question that should be asked as part of the fairness analysis, but it does illustrate that a liquid market for a buyer’s shares does not necessarily answer questions about value, growth potential and risk profile. When one surveys the M&A history of banks, there is no shortage of sellers who were waylaid by the performance of the buyer’s shares after the deal closed.

We at Mercer Capital have extensive experience, garnered over more than four decades in business, in valuing and evaluating the shares (and debt) of financial and non-financial service companies.

Potential Intersection of Estate Planning During the Divorce Process

Divorce is often an emotionally and financially draining process, and estate planning may be the last thing on the minds of the divorcing parties. However, reviewing estate plans during a divorce can be a step in preparing for the future. Like many aspects of divorce, timing matters and there are both advantages and drawbacks to updating your estate documents while the divorce is still pending.

The Pros of Estate Planning During Divorce

Divorce proceedings can take months or even years. During that time, the current spouse may still have legal authority over health care decisions, financial matters, and inheritances under a preexisting estate plan. Updating documents such as power of attorney, health care proxy, and beneficiary/trustee/guardian designations (if legally allowable as discussed later) can ensure that soon-to-be exes do not have unwanted control over affairs in the event of incapacitation or death before the divorce is finalized. There can also be a form of “reverse” estate planning, such as placing restrictions on certain persons as beneficiaries under a trust.

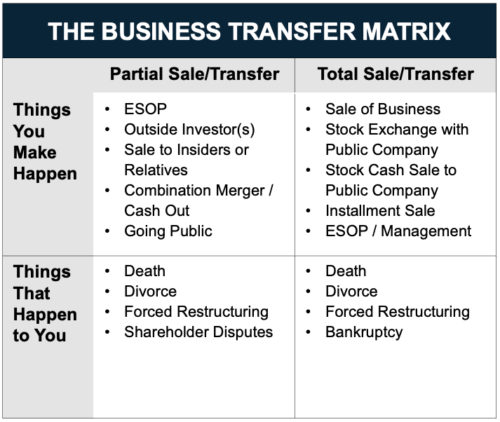

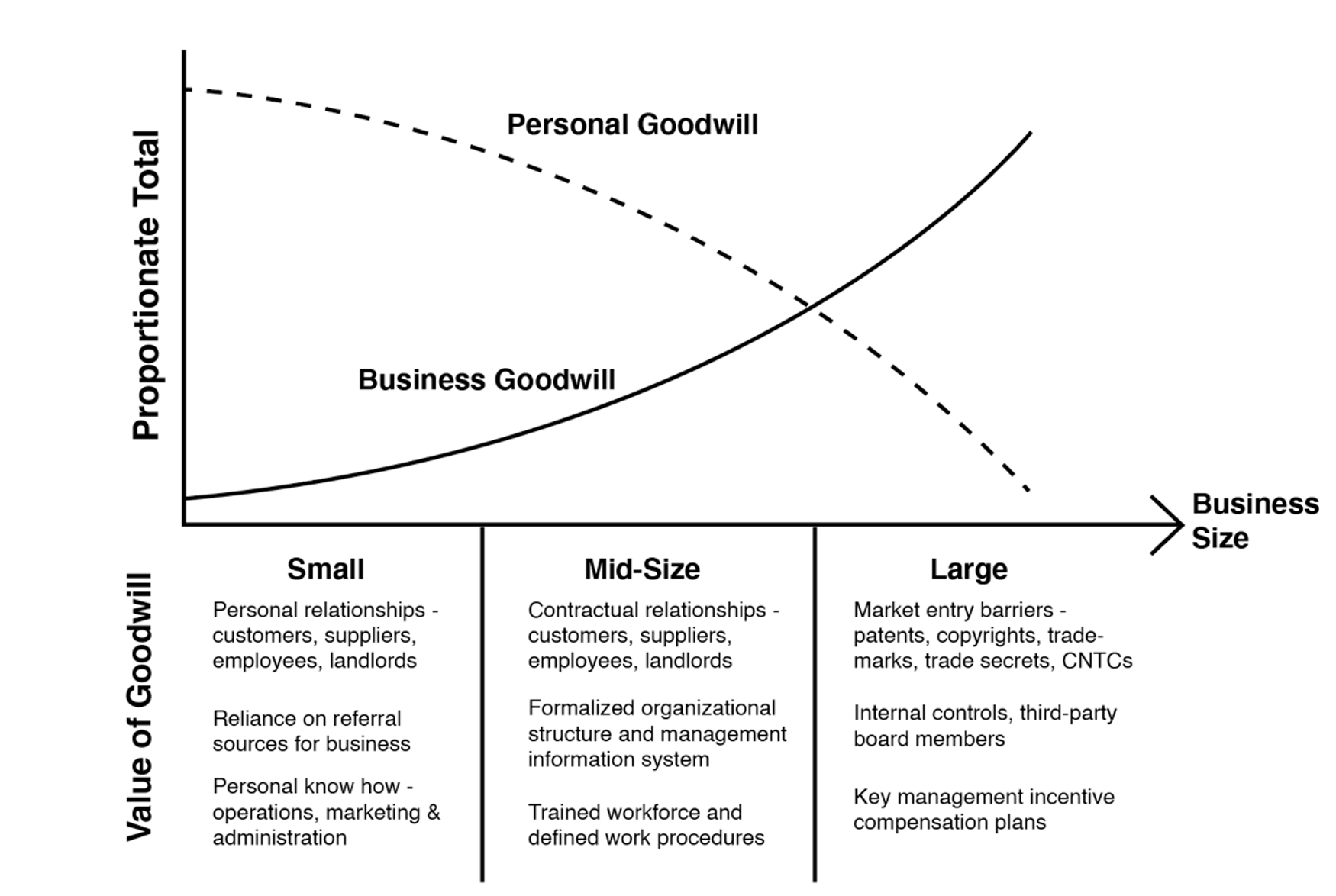

While everyone wants to know the value of their privately held business, obtaining a valuation can be costly, which leads many business owners to go years without one. As seen in our graphic “The Business Transfer Matrix,” death and divorce are two events that trigger the valuation process.

Revising an estate plan contemporaneously with ongoing divorce proceedings may create efficiencies for the divorcing and gifting parties. When a couple owns a business or multiple business interests, the expert fees associated with valuing the businesses can pile up quickly, particularly when there are competing experts and two sets of attorneys preparing for a contentious trial.

Contrast this with the parties agreeing on one expert to value a business interest, which is then transferred for the benefit of the divorcing couple’s children. Beyond lower costs, this approach may also bring the significant benefit of reduced acrimony in settlement discussions. The in-spouse (the one who stays involved with the business post-divorce) does not want to overpay the other spouse, just as the out-spouse wants to receive as much as possible in equitable distribution. Transferring the economic interest can “take valuation off the table” because the business interest would no longer be part of the marital estate. The transfer might even be structured so that one of the divorcing parties maintains operational control of the business. It would also provide the many benefits of estate planning that apply absent a divorce proceeding.

Transferring the business interest out of the marital estate may also become a last resort if the two valuation experts reach irreconcilable conclusions of value for the business. Married couples may also have access to tax benefits, such as gift-splitting, that will no longer be available post-divorce. While estate planning during divorce is not a one-size-fits-all approach, it can be a creative way to settle a case and save money in the long run.

The Cons of Estate Planning During Divorce

Some states impose “automatic temporary restraining orders” (ATROs) during divorce proceedings, which can limit the ability to change beneficiary designations or transfer assets. These rules are designed to prevent one spouse from hiding or dissipating marital property but may also restrict legitimate estate planning modifications. This is an appropriate safeguard. It also underscores that the parties must cooperate if they are to embark upon estate planning during a divorce, and such cooperation is frequently absent. For estate planning to achieve optimal outcomes in a divorce, a proper team should be assembled at the outset. Both parties are well served by preserving shared end goals such as tax planning, rather than letting the dispute devolve into maneuvering for personal benefit.

Until the divorce is finalized, the parties’ individual financial pictures are in flux. Open questions such as the value of assets (like a business), equitable distribution, alimony, and child support obligations, all areas in which we typically assist, can make it hard to plan accurately for future asset composition. Premature changes to an estate plan might also conflict with the eventual divorce settlement or require costly revisions in the future. That risk is greatest if both parties initially agree and then one or both back out of the estate planning process.

One financial concept to consider is “core” and “surplus” capital, a framework that frequently arises in our discussions with wealth advisors. During an estate planning process, it’s important to know how much you can comfortably transfer to future generations or charitable causes. Reducing taxes and increasing proceeds for future generations is an admirable goal, but it should not come at the expense of your own financial security. Stated plainly, advice we’ve heard is “don’t give away more than you can afford to realize your personal financial objectives.”

The divorce process already introduces a significant amount of uncertainty, going from one family budget to two households, among a host of other changes. Estate planning requires clear thinking and careful consideration — two things that may be in short supply during a divorce. For some, adding another layer of legal work may overcomplicate the process and lead to unwanted delays. This complexity reminds us of estate planning some business owners do in advance of selling their business.

To accomplish successful estate planning during divorce, the parties’ respective legal counsel may need to collaborate on certain issues. While some family law groups are part of larger law firms, many other firms focus exclusively on family law. Firms with capabilities in both estate planning and family law may have a built-in advantage in arriving at a mutually acceptable estate plan during a divorce. Success, however, ultimately comes down to communication among the attorneys, and being part of the same firm is not a prerequisite for good communication. Even a firm that offers both legal disciplines may not be the most efficient choice if an estate plan was already drafted by another firm. In that case, it may be easiest to have the original drafting firm update the documents based on discussions with the family law attorneys.

From a valuation perspective, a firm that is well versed in both family law and estate planning is best positioned to navigate the labyrinth of issues that lead to equitable outcomes. Mercer Capital’s senior professionals are members of the American Academy of Matrimonial Lawyers (AAML) Foundation Forensic & Business Valuation Division and are also regularly engaged by nationally prominent estate planning attorneys. Our extensive valuation work for both family law and estate planning matters keeps us well informed of material developments in each field and how they may affect valuation and planning strategies.

Conclusion

In short, while estate planning during divorce adds complexity, in the right circumstances it can also be a vital resource — one worth considering depending on the size and composition of the marital estate as well as the cooperation opportunities amongst the divorcing parties. In all scenarios, seasoned legal counsel is advised. Combined with experienced valuation assistance, consideration of estate planning techniques in the midst of a divorce may result in a novel solution to the inherently complex and contentious divorce process.

Takeaways from the Pierce Case: The Importance of Relevant Data and Reasoned Analysis

The 2025 U.S. Tax Court opinion in Kaleb J. Pierce v. Commissioner of Internal Revenue (T.C. Memo 2025-29) offers insight on several issues that regularly feature in the valuations of privately held business interests. By presenting an issue-by-issue analysis, the Pierce decision reinforces an important message for appraisers and estate planners: relevant data and reasoned analysis carry the day in court.

Background

The subject company, Mothers Lounge, LLC, an S corporation for tax purposes, sold cheaply manufactured mother and baby products directly to consumers. The business relied on a “free, just pay shipping,” no-returns model, which afforded it a high profit margin. The model also came with a plethora of unsavory business practices, including copying competitor products, overcharging customers for shipping, undermining wholesalers and marketing affiliates, and suppressing customer reviews.

The business history and practices detailed in the Findings of Fact are sufficient to raise eyebrows in a room full of former FTX executives. The dubious business model invited frequent litigation, with most lawsuits filed for trademark infringement. Two lawsuits were specifically described, one of which was for patent infringement and illegal marketing practices that had “ballooned into an existential threat.” Adding to these murky undercurrents were an affair involving one of the business’s principals and a blackmail demand letter that spurred an FBI investigation. As noted by the Tax Court, these developments had “caused extreme dysfunction with the company’s management and demoralized the workforce” in the timeframe before the valuation date.

The company’s business practices may have raised eyebrows, but they were lucrative. The first successful product, a nursing cover, illustrates the model:

Despite giving the product to customers for “free,” Mothers Lounge, LLC earned a healthy 64% contribution margin on each unit sold, which was more than sufficient to cover all other operating expenses of the business. In 2013, the company had an EBITDA (earnings before interest, taxes, depreciation and amortization) margin of 29%, which many readers will recognize as above average for a consumer products business. The company was debt-free and required minimal investments in depreciating assets, making EBITDA a good proxy for pre-tax cash flow.

The Pierce court had to decide the proper value for gift tax purposes of two minority interests in Mothers Lounge, LLC that were transferred in 2014 (a 29.4% interest and a 20.6% interest).

Expert Witnesses

The taxpayer’s expert prepared a valuation report submitted at trial. During the administrative appeal of the case in 2017, the taxpayer’s expert had also prepared a forecast for the business (the “2017 Forecast”). To recap, there were two valuation experts at trial, one for the taxpayer and one for the IRS. The IRS’ valuation expert relied on the 2017 Forecast in his appraisal of the subject interests, but the taxpayer’s expert did not rely on it in preparing his own appraisal.

Key Issues

Forecast

While both experts agreed on the application of the income approach, they relied on different forecasts. The forecast prepared by the taxpayer’s expert for his appraisal report relied on an analysis and assessment of relevant factors and market trends “known or knowable” as of the valuation date, which the Court deemed credible. In contrast, the IRS’ valuation expert relied on the 2017 Forecast “without independent verification,” which the Court easily rejected.

The fact that the taxpayer’s expert prepared the forecasts underlying both his own report and that of the IRS’ valuation expert is a unique feature of the case. While the Pierce court deemed the forecast used by the taxpayer’s expert credible, it declined to ascribe weight to the 2017 Forecast used by the IRS’ valuation expert (which was prepared by the taxpayer’s expert). According to the opinion, the taxpayer’s expert “was in a time crunch” to prepare the 2017 Forecast and he ultimately relied on post-valuation data to support its projections. The Court noted that the 2017 Forecast lacked any analysis or discussion of the events surrounding the FBI investigation and inappropriately relied on post-valuation data. The Court pointedly stated that “this reliance blurs the line between information that was known or knowable as of the valuation date and the information that was not reasonably foreseeable as of the valuation date.”

Tax Affecting

Both experts agreed that tax affecting the earnings of the company (an S corporation) was appropriate and used the Delaware Chancery method to calculate substantially equivalent tax rates (26.2% and 25.8%).
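The Delaware Chancery method is associated with Delaware Open MRI Radiology Associates v. Kessler. It solves for the entity-level tax rate that, combined with a dividend tax on distributions, leaves a C corporation shareholder with the same after-tax income that an S corporation shareholder keeps after paying personal tax on pass-through earnings. The sketch below implements that one formulation. The tax rates are illustrative assumptions only; the inputs behind the experts’ 26.2% and 25.8% rates are not described here. The function name is hypothetical.

```python
def delaware_equivalent_rate(personal_rate: float, dividend_rate: float) -> float:
    """Entity-level tax rate under which a C corporation shareholder, after dividend tax,
    keeps the same share of $1 of pre-tax earnings as an S corporation shareholder
    who pays personal tax once on pass-through income."""
    s_corp_after_tax = 1.0 - personal_rate                 # kept per $1 of pre-tax earnings
    required_pre_dividend = s_corp_after_tax / (1.0 - dividend_rate)
    return 1.0 - required_pre_dividend

# Illustrative assumptions only: 40% personal rate on ordinary income, 15% dividend rate
rate = delaware_equivalent_rate(0.40, 0.15)
print(f"Equivalent entity-level tax rate: {rate:.1%}")
```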

The Court commented that tax affecting earnings of tax pass-through entities can be rejected where “a party fails to adequately explain” its necessity or where the experts “have not accounted for the benefits of S corporation status to shareholders.” We note that the 2017 Tax Cuts and Jobs Act brought C and S corporations closer to parity in taxation, diminishing the additional economic benefits formerly realized by owners of pass-through entities. Nonetheless, the Pierce opinion affirms that the valuation of an interest in a tax pass-through entity should account for the effect, if any, of tax status on the value of the interest.

Discount Rate

Mothers Lounge, LLC had no debt and both experts developed a cost of equity capital (COEC) discount rate using the build-up method. The key differences between the experts were in the presentation of the underlying data and the application of a company-specific risk premium (CSRP).

The taxpayer’s expert used the Kroll Cost of Capital Navigator platform, which includes tables with output results, but does not present the underlying data. In contrast, the IRS’ valuation expert “provided a thorough review of his process and the academic papers that supported his equations.” Citing the lack of supporting data in the taxpayer’s expert report, the Court accepted the COEC rate concluded by the IRS’ valuation expert.

Of particular interest is the company-specific risk premium. The taxpayer’s expert added a CSRP of 5% to the build-up analysis, while the IRS’ valuation expert applied a 0% premium. In discussing the company-specific risk premium, the Court acknowledged that the build-up method allows for the consideration of such risks. It expressed concern, however, that such risk factors may already be accounted for in other elements of the build-up approach (such as the size premium). Ultimately, the Court did not accept the premium applied by the taxpayer’s expert, who had cited five risk factors he considered in arriving at his conclusion for the premium. The Court chided the taxpayer’s expert for failing to provide sufficient detail to allow the Court to understand the derivation of the selected premium. The Court’s conclusion confirms the need to support the application of a company-specific risk premium with reference to available market evidence and the overall reasonableness of the resulting conclusion of value.
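The sketch below shows why the CSRP dispute matters, using the build-up arithmetic and a single-period capitalization. The capitalization is used for brevity in place of the multi-year forecasts the experts actually used. Only the 0% and 5% CSRP values come from the case; every other input is hypothetical. Some build-up models also add an industry risk premium, which this sketch omits.

```python
def build_up_cost_of_equity(risk_free: float, equity_risk_premium: float,
                            size_premium: float, company_specific: float = 0.0) -> float:
    """Build-up method: the risk-free rate plus each layered risk premium."""
    return risk_free + equity_risk_premium + size_premium + company_specific

def capitalized_value(next_year_cash_flow: float, discount_rate: float, growth: float) -> float:
    """Single-period capitalization: cash flow / (discount rate - long-term growth)."""
    if discount_rate <= growth:
        raise ValueError("discount rate must exceed the long-term growth rate")
    return next_year_cash_flow / (discount_rate - growth)

# Hypothetical inputs; none of these are the experts' actual figures.
RISK_FREE, ERP, SIZE, GROWTH, CASH_FLOW = 0.03, 0.05, 0.05, 0.03, 1_000_000

for csrp in (0.00, 0.05):  # the two CSRP positions taken by the experts in Pierce
    coec = build_up_cost_of_equity(RISK_FREE, ERP, SIZE, csrp)
    value = capitalized_value(CASH_FLOW, coec, GROWTH)
    print(f"CSRP {csrp:.0%}: cost of equity {coec:.1%}, indicated value ${value:,.0f}")
```

Under these assumptions, adding the 5% premium lowers the indicated value by roughly one-third. That sensitivity is why courts expect the premium to be well supported.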

Applicable Discounts

Both experts applied discounts for lack of control and lack of marketability in the valuation of the subject minority interests.

- With respect to the discount for lack of control, the experts differed in the approach used to determine the discount and its application. The Court adopted the taxpayer’s expert’s 5% discount, which was based on analysis of the company’s operating agreement, capital market evidence, and consideration of relevant facts and circumstances. In contrast, the IRS’ valuation expert applied a 10% discount, but only to the non-operating assets of the business. In rejecting the latter approach, the Court once again cited the lack of underlying supporting data and analysis.

- The experts applied similar discounts for lack of marketability (25% and 30%), supported by detailed explanations of their methodologies and conclusions. The Court found the methodology used by the taxpayer’s expert to be “slightly more persuasive” and once more expressed concern that the IRS’ valuation expert relied on the 2017 Forecast. Of note, the Court’s finding in favor of the (lower) marketability discount proffered by the taxpayer’s expert was actually adverse to the taxpayer’s overall position.
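Discounts for lack of control and lack of marketability are typically applied in sequence, so they compound rather than add. The sketch below pairs the 5% lack-of-control discount the Court adopted with each of the two marketability discounts. The pro rata value is hypothetical. The sketch does not model the IRS expert’s approach of applying a control discount only to non-operating assets.

```python
def apply_discounts(pro_rata_value: float, dloc: float, dlom: float) -> float:
    """Apply the lack-of-control discount first, then the marketability discount
    to the resulting marketable minority value."""
    marketable_minority = pro_rata_value * (1 - dloc)
    return marketable_minority * (1 - dlom)

def combined_discount(dloc: float, dlom: float) -> float:
    """Total discount from pro rata value; sequential discounts compound, not add."""
    return 1 - (1 - dloc) * (1 - dlom)

PRO_RATA_VALUE = 1_000_000  # hypothetical pro rata value of the subject interest
DLOC = 0.05                 # lack-of-control discount the Court adopted

for dlom in (0.25, 0.30):   # the two marketability discounts discussed in the opinion
    value = apply_discounts(PRO_RATA_VALUE, DLOC, dlom)
    print(f"DLOM {dlom:.0%}: combined discount {combined_discount(DLOC, dlom):.2%}, "
          f"value ${value:,.0f}")
```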

Conclusion

The material valuation issues in the Pierce case include the proper data to use in preparing a forecast, tax affecting pass-through earnings, and supporting appropriate risk factors and discounts to be applied in the valuation of closely held business interests.

The Court’s consideration of each issue underscores the importance of marshalling relevant data and presenting reasoned analysis in valuation reports.

All in the Family: X Corp. Merges with x.AI Corp.

Elon Musk Continues to Explore Corporate Governance and Fairness Boundaries

This presentation explores the fairness and corporate governance issues surrounding the March 2025 merger of x.AI Corporation and X Corporation, both controlled by Elon Musk. With a combined enterprise value of $125 billion, the stock-for-stock transaction raises questions about valuation methodology, board independence, conflicts of interest, and procedural rigor. Drawing comparisons to Musk’s past deals—such as Tesla’s acquisition of SolarCity and the leveraged buyout of Twitter—the analysis examines whether this latest “all in the family” merger meets the standards of fairness typically expected in transactions involving controlling shareholders.

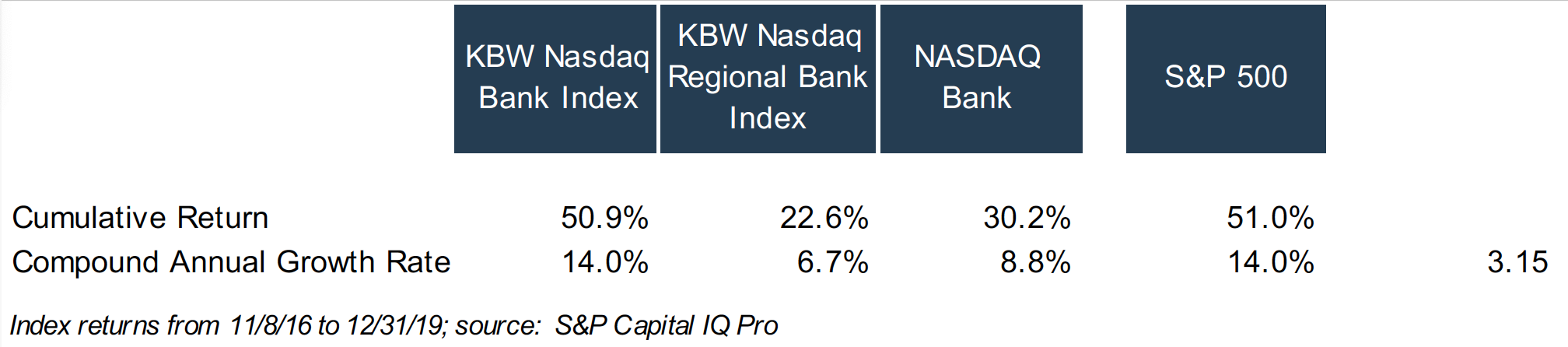

Dividends and Shareholder Returns – A Ten-Year Lookback

Morningstar recently reported that, amid volatility in the equity markets, U.S. equity funds and ETFs took in a relatively modest $5.7 billion in March 2025, of which $5.6 billion flowed into dividend growth funds. This investor preference for dividends prompted us to reevaluate the role of dividends in bank shareholder returns.

An investor’s total return is a function of three variables:

- The stock price change over the holding period

- The cumulative dividends received

- The return on reinvested dividends. The market convention is that a dividend payment is reinvested in the issuer’s common stock. If the issuer’s common stock price increases after receipt of the dividend, this appreciation in value of the reinvested dividend will enhance the shareholder’s total return.

To simplify this analysis, we do not consider taxes.
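The sketch below computes the three components of total return for a single stock. Following the market convention described above, each dividend is assumed to be reinvested in the issuer’s stock, here at the price for the period in which it is paid. Taxes and transaction costs are ignored, as in the analysis. The function name, prices, and dividends are hypothetical.

```python
def total_return_components(prices, dividends):
    """Split a cumulative total return into the three components listed above.

    prices[0] is the starting price; prices[t] (t >= 1) is the price at which the
    dividend paid in period t, dividends[t - 1], is assumed to be reinvested.
    """
    if len(prices) != len(dividends) + 1:
        raise ValueError("need exactly one more price than dividend payments")
    start, end = prices[0], prices[-1]
    shares = 1.0
    for price, dividend in zip(prices[1:], dividends):
        shares += shares * dividend / price      # reinvest this period's dividend
    total = shares * end / start - 1
    price_change = end / start - 1
    cash_dividends = sum(dividends) / start      # dividends on the original share only
    return {
        "price change": price_change,
        "cumulative dividends": cash_dividends,
        "return on reinvested dividends": total - price_change - cash_dividends,
        "total return": total,
    }

# Hypothetical share: $20 starting price, $0.80 annual dividend, rising price
result = total_return_components([20.0, 21.0, 22.0, 24.0], [0.80, 0.80, 0.80])
for name, value in result.items():
    print(f"{name:>32}: {value:.2%}")
```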

A Middling Decade

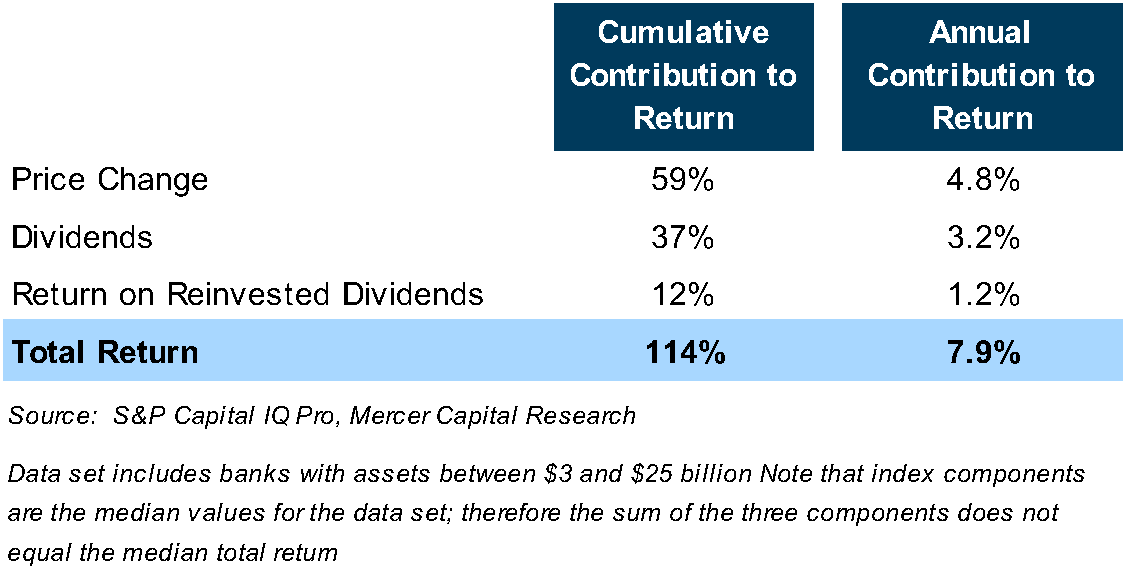

In a period marked by moderate share price appreciation, dividends become a crucial component of shareholders’ total returns. As indicated in Table 1 below, the group of publicly traded banks generated a median total return of 114% over the 2014 to 2024 period, or a 7.9% annualized return. For the median bank, stock price appreciation was 59%, implying that the remainder of the median bank’s 114% total return came from dividends and the return on reinvested dividends.

Returns were rather pedestrian for several reasons.

- The median compound annual growth rate in earnings per share and tangible book value per share, excluding accumulated other comprehensive income, was about 7% over the 2014 to 2024 period. The impact of higher interest rates continues to weigh on banks’ earnings and, therefore, book value growth.

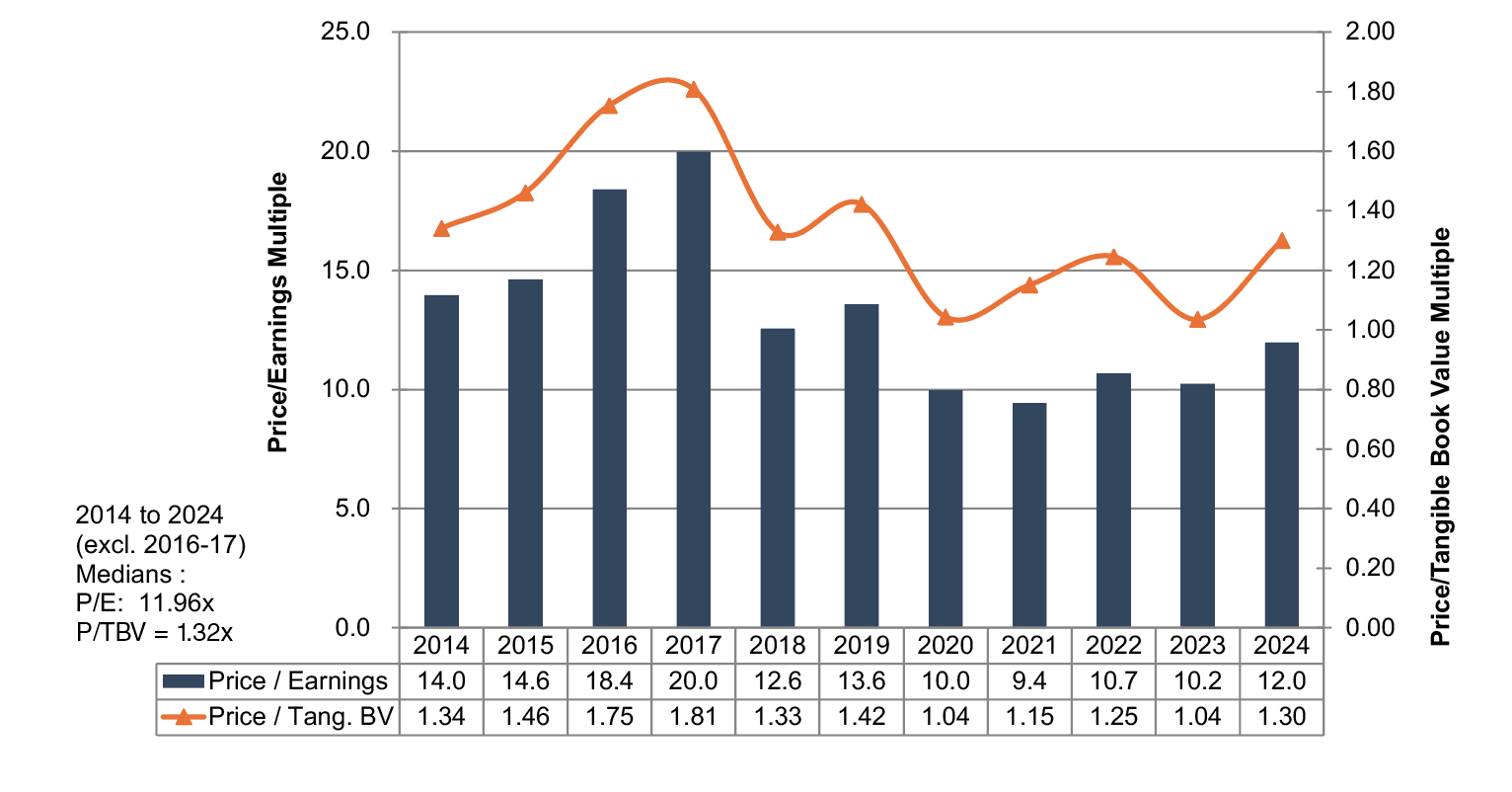

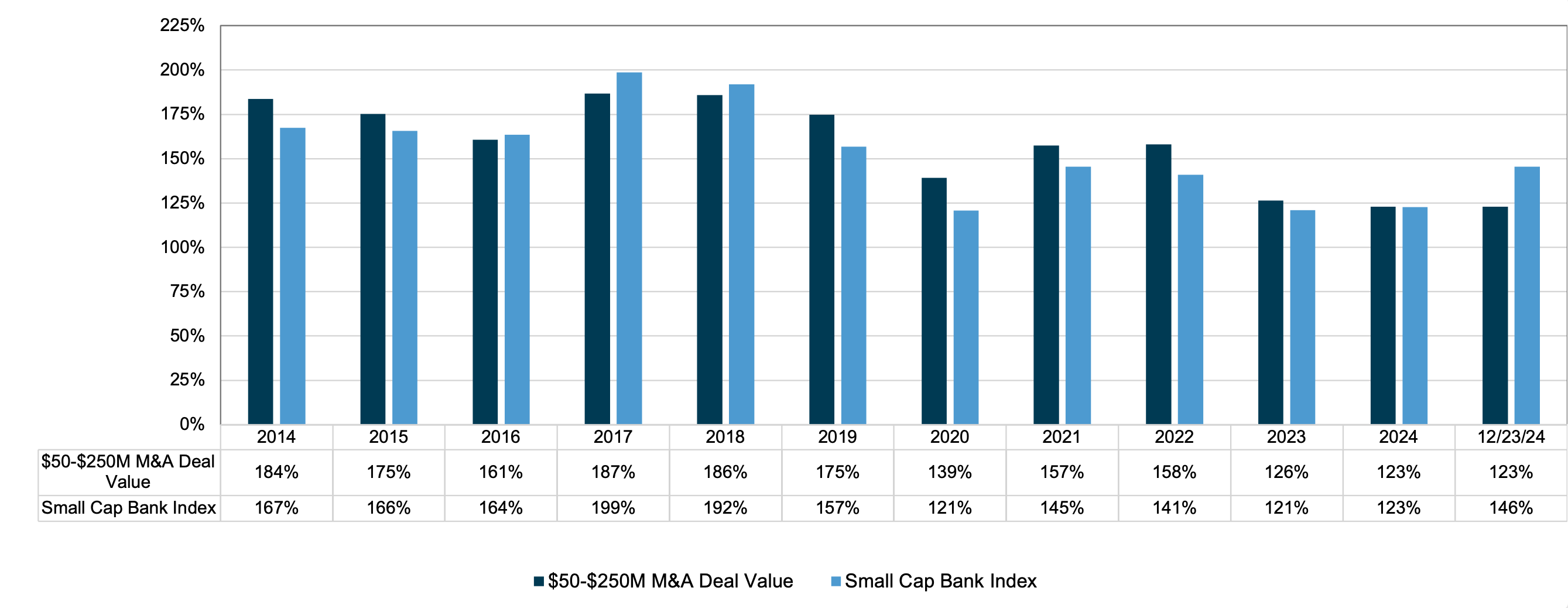

- Multiple compression also weighed on returns. As shown in the chart below, price/earnings multiples compressed between 2014 and 2024, while price/tangible book value multiples would have fallen to a greater extent absent the unrealized losses existing on securities portfolios.

Price/Earnings and Price/Tangible Book Value Multiples (Banks with Assets of $1 to $3 Billion and Return on Tangible Equity of 7.5% to 15%)

Dividends Make a Difference


Table 1

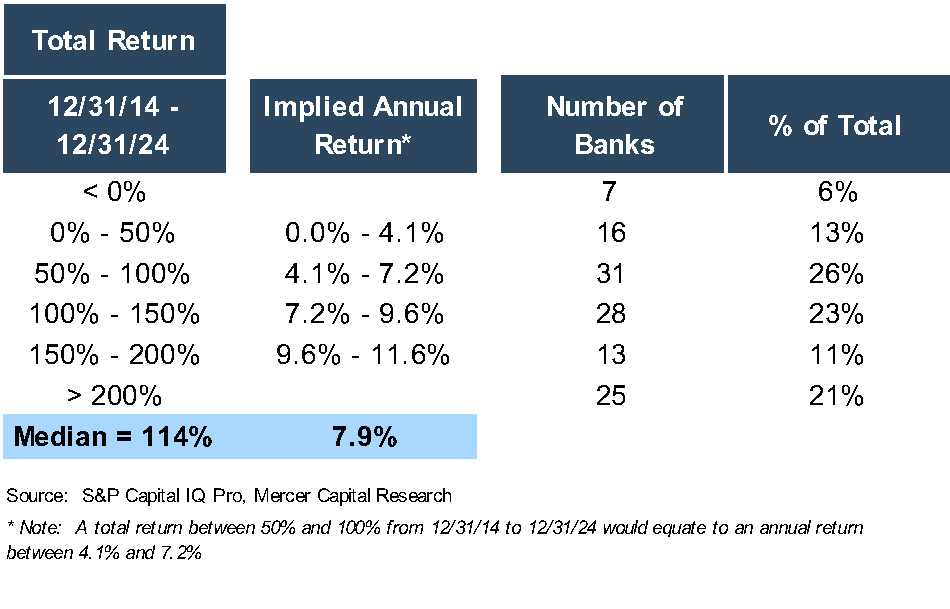

Table 2 stratifies the 120 banks included in the analysis by their total return. Almost one-half of the banks reported a total return between 50% and 150% over the 2014 to 2024 period, which equates to a mid to high single-digit annual return.
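Cumulative and annualized returns are linked by simple compounding. The sketch below assumes a ten-year holding period (year-end 2014 to year-end 2024), which reproduces the 114%-to-7.9% conversion cited earlier. On that basis, the 50% and 150% band edges translate to roughly 4.1% and 9.6% per year. The function name is hypothetical.

```python
def annualized_return(cumulative_return: float, years: float) -> float:
    """Convert a cumulative return over `years` into a compound annual rate."""
    return (1 + cumulative_return) ** (1 / years) - 1

YEARS = 10  # assumption: 2014 to 2024 measured year-end to year-end

for cumulative in (0.50, 1.14, 1.50):  # Table 2 band edges and the 114% median
    print(f"{cumulative:.0%} cumulative -> {annualized_return(cumulative, YEARS):.1%} per year")
```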

Table 2

Implications

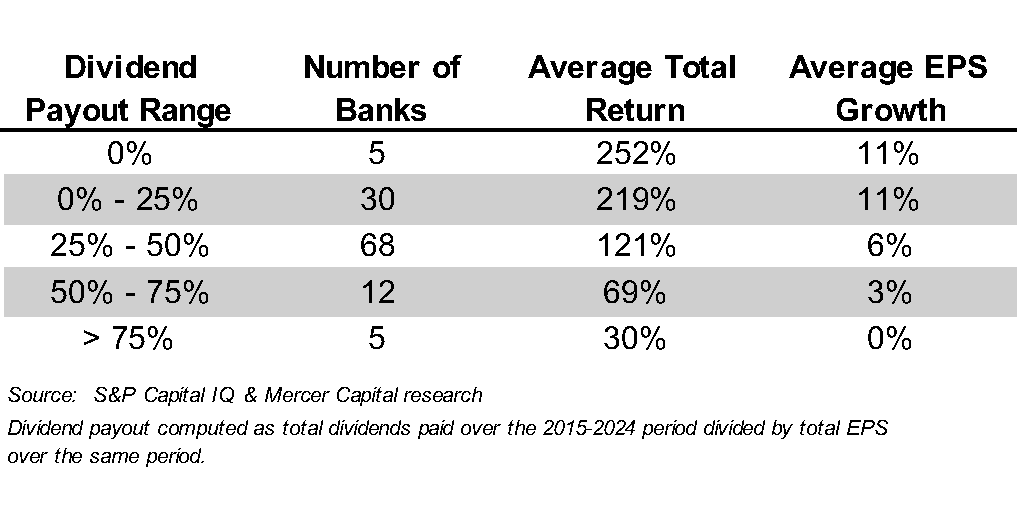

Does the impact of dividends on shareholder returns mean that bank management teams should immediately increase dividend payouts? Not necessarily, as markets reward growth in per-share earnings and book value. Table 3 suggests that shareholder returns are negatively correlated with dividend payouts; that is, banks with the highest dividend payouts report the lowest shareholder returns. It is difficult to draw firm conclusions, though, as few banks in the analysis have payout ratios of 0% or above 50%.

Table 3

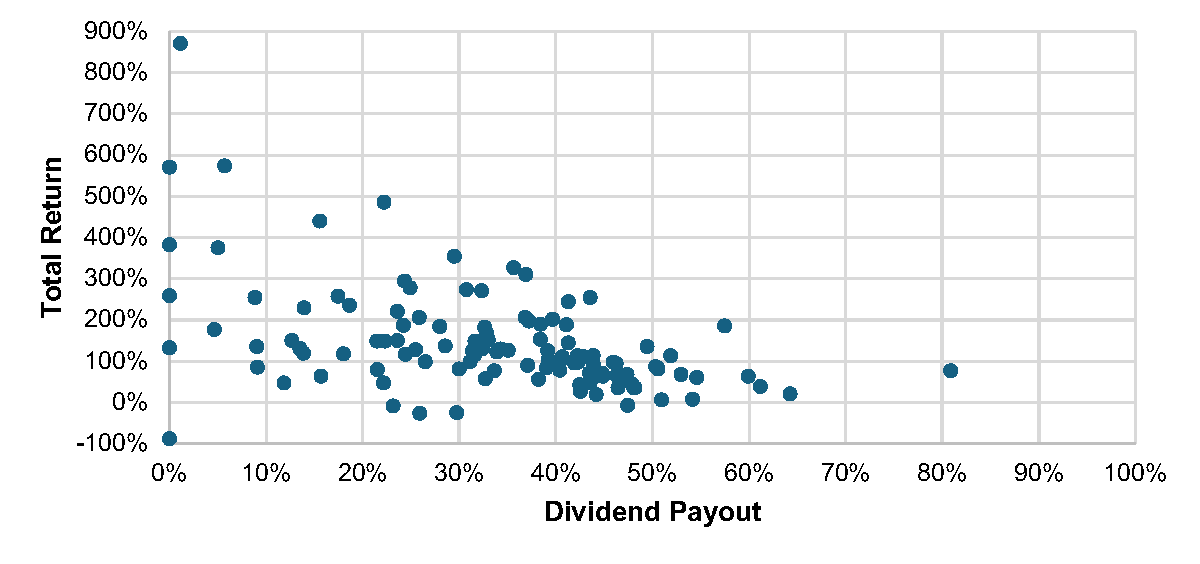

Figure 1 below compares dividend payout ratios and shareholder returns. The scatter plot suggests another factor influencing shareholder return: risk. Banks with low dividend payouts report widely dispersed returns, but returns tend to be more clustered for banks with dividend payouts in the 30% to 50% range. Dividend payout ratios are one way to estimate the potential volatility—and therefore the risk—of an investment, and investors want higher returns for assuming more risk.

Figure 1

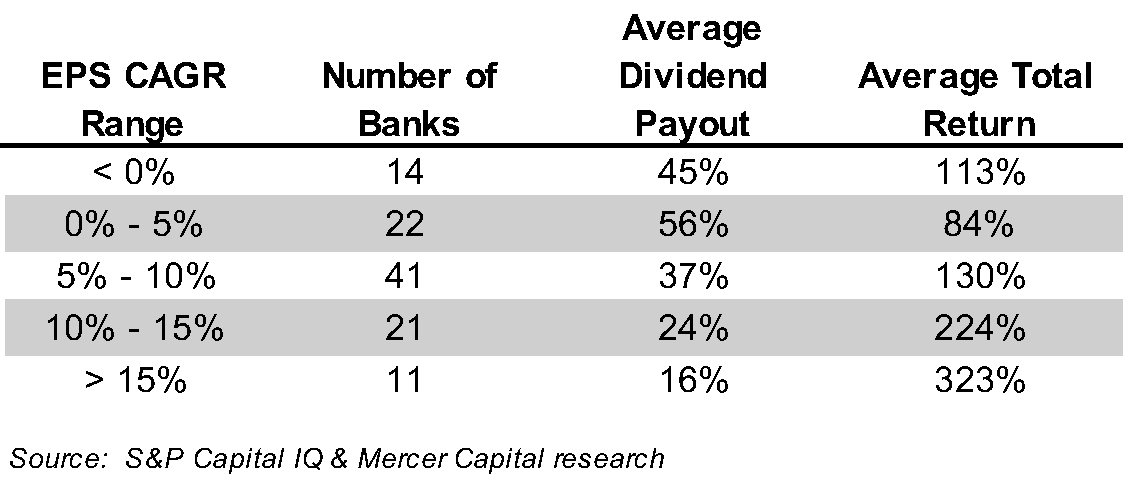

The relationship between shareholder returns and dividend payouts makes sense, as banks with high payouts often have fewer organic growth opportunities and, therefore, lower EPS growth. Table 4 stratifies the data set by compound annual growth in EPS over the 2014 to 2024 period. Banks with the lowest EPS growth have the highest dividend payout ratios (and lower shareholder returns).

Table 4

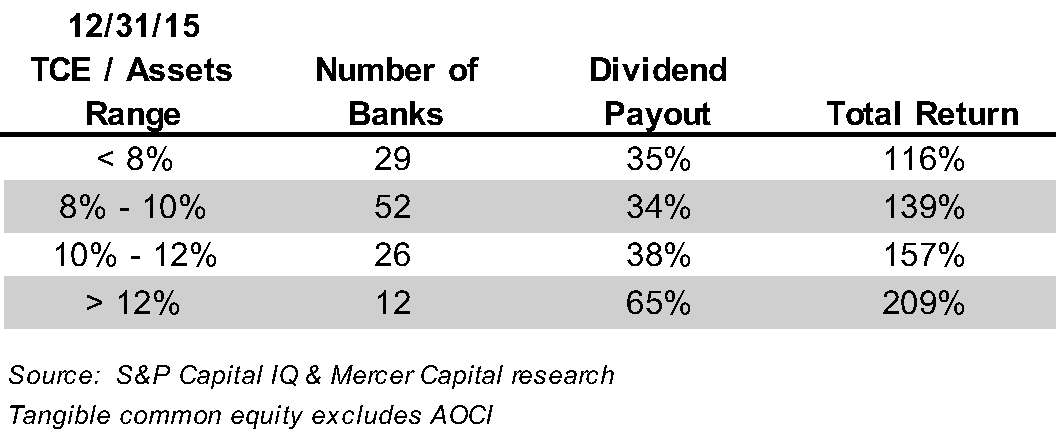

We also evaluated the relationship between the tangible common equity/assets ratio and dividend payouts. One may expect that banks with lower TCE/asset ratios have lower dividend payout ratios, but the data does not support this expectation. We note, though, that banks with excess capital, defined as TCE/asset ratios over 12%, tend to maintain higher dividend payout ratios. These banks can offer a relatively high payout, as well as maintain dry powder for opportunistic initiatives.
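The excess-capital screen can be expressed as a simple threshold test. The sketch below assumes that TCE deducts goodwill and other intangibles from common equity, and that the denominator is tangible assets (total assets less the same intangibles). This is a common convention, but the study may define the ratio differently. The balance sheets are hypothetical.

```python
def tce_ratio(common_equity: float, intangibles: float, total_assets: float) -> float:
    """Tangible common equity / tangible assets (assumed definition)."""
    return (common_equity - intangibles) / (total_assets - intangibles)


def has_excess_capital(ratio: float, threshold: float = 0.12) -> bool:
    """Flag banks above the 12% TCE/assets threshold used in the text."""
    return ratio > threshold


# Hypothetical balance sheets ($ millions): equity, intangibles, total assets.
for equity, intangibles, assets in [(1_500, 200, 10_000), (1_000, 200, 10_000)]:
    ratio = tce_ratio(equity, intangibles, assets)
    print(f"TCE/assets {ratio:.1%}, excess capital: {has_excess_capital(ratio)}")
```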

Table 5

Conclusions

The analysis shows that dividends, while an important component of shareholder returns, do not necessarily drive shareholder returns. Our analysis includes 120 banks, and 60 have dividend payout ratios between 30% and 50%. With dividend payout ratios clustered in a relatively tight range, it is difficult to make fine distinctions about dividend policies and shareholder returns. Nor does the analysis imply that a bank with low EPS growth would likely enhance its shareholder returns by reducing its dividend payout ratio.

Over a long period, we know that shareholder value is created by growing earnings per share, which leads to rising tangible book value per share. Earnings also enable the dividend policy. Most banks have recognized, though, that they can both invest in growth opportunities and provide immediate return to shareholders through dividends. In challenging market environments, as occurred over the last ten years, this capital management strategy provides a material source of shareholder return.

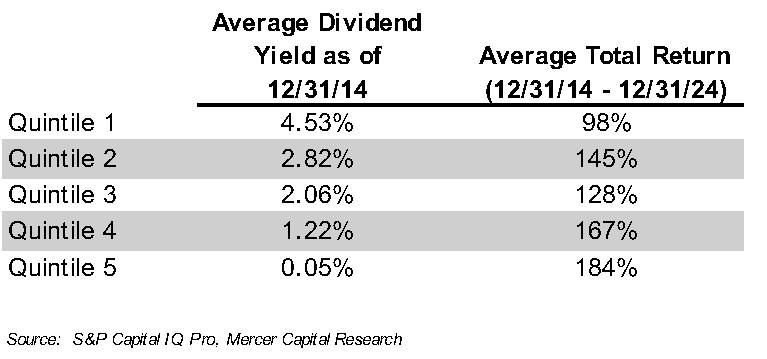

While BankWatch does not make investment recommendations, we noted a recent article in the Wall Street Journal regarding dividend investing. It proposed, with some historical support, sorting the S&P 500 into quintiles by dividend yield. The research suggests that the quintile with the highest dividend yield underperforms, as high yields often result from financial distress and suggest the risk of future dividend cuts. Rather, investors should invest in the quintile with the second highest dividend yield, which has been shown to outperform the S&P 500.

We replicated this investment strategy for our bank universe. We did find that banks with the highest dividend yields at year-end 2014 underperformed over the next ten years. However, we found no evidence that the second quintile outperformed (Table 6).

Table 6
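The screen amounts to a sort-and-split. The minimal sketch below ranks banks by year-end dividend yield, forms five near-equal groups, and compares each quintile's mean subsequent return with the universe mean. It is an illustration of the idea only, not the Wall Street Journal's or BankWatch's exact procedure. The data are hypothetical, not Table 6.

```python
from statistics import mean


def quintile_mean_returns(banks):
    """banks: (dividend_yield, subsequent_total_return) pairs.

    Ranks by yield (highest first), splits into five near-equal groups, and
    returns the mean subsequent return for quintiles 1 (highest yield) to 5.
    """
    if len(banks) < 5:
        raise ValueError("need at least five banks to form quintiles")
    ranked = sorted(banks, key=lambda b: b[0], reverse=True)
    n = len(ranked)
    return [
        mean(ret for _, ret in ranked[q * n // 5:(q + 1) * n // 5])
        for q in range(5)
    ]


# Hypothetical year-end yields and subsequent ten-year total returns.
banks = [(0.060, 0.40), (0.055, 0.60), (0.045, 1.00), (0.040, 1.20), (0.035, 1.10),
         (0.030, 1.30), (0.025, 1.20), (0.020, 1.00), (0.015, 1.40), (0.010, 0.90)]
by_quintile = quintile_mean_returns(banks)
universe = mean(ret for _, ret in banks)
for q, avg in enumerate(by_quintile, start=1):
    print(f"Q{q}: {avg:.0%} vs. universe {universe:.0%}")
```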

Mortgage Banking’s Next Chapter: Is a Recovery Taking Root?

A little over four years ago, we published a two-part series entitled Mortgage Banking Lagniappe (Lagniappe is the Cajun word for “bonus” or “a little extra”) as all-time lows in rates powered big mortgage earnings for banks and non-banks. Since then, a multi-year hangover has developed that causes us to ask: do rates have to fall materially for mortgage bank earnings to “normalize” and begin to contribute to bank earnings?

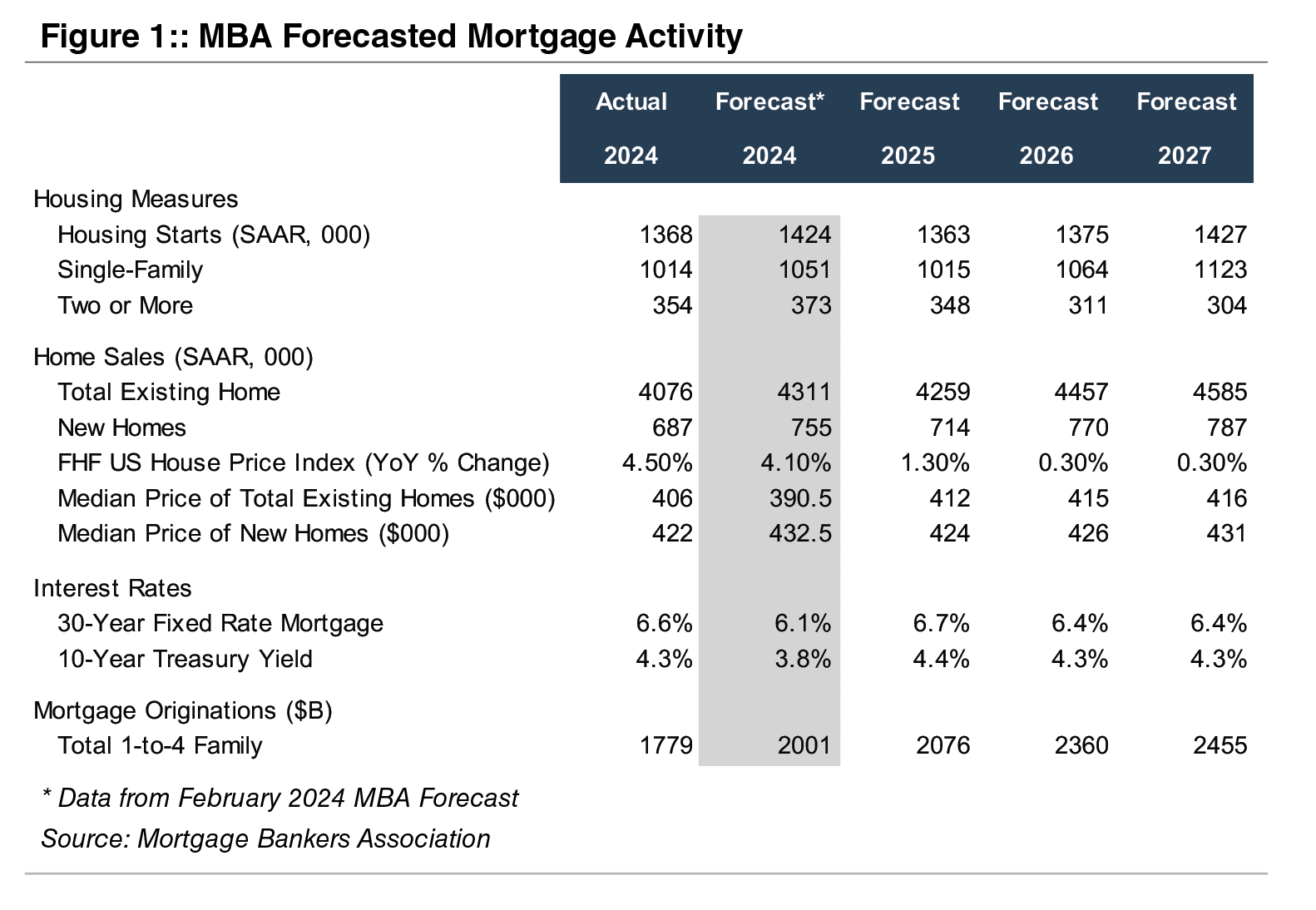

Figure 1, below, shows the level of and trends in key housing/mortgage data for 2024 and the Mortgage Bankers Association’s (MBA) three-year forecast.

In our observation, the MBA forecast, like many forecasts, tends to suffer from recency bias. Around year-end 2023, MBA’s forecast for 2024 projected a much stronger year than occurred, because mortgage rates did not decline much even though the Fed cut its overnight policy rate by 100bps.

As a result, housing starts and sales of existing and new homes were well below MBA’s forecast. However, the median price of existing homes was $406 thousand at year-end 2024, a 4.5% increase from the prior year that exceeded the 4.1% forecast increase.

Although inventories of unsold homes are increasing, and in some areas surging, MBA expects home prices to increase modestly over the coming three years, with the 30-year mortgage rate projected to remain in the mid-6% range. By 2027, home sales are expected to be 12% above 2024 levels, while mortgage volume is expected to increase nearly 40% as the refinance share picks up. If that comes to pass, mortgage banking earnings may transition to being accretive to commercial bank earnings.

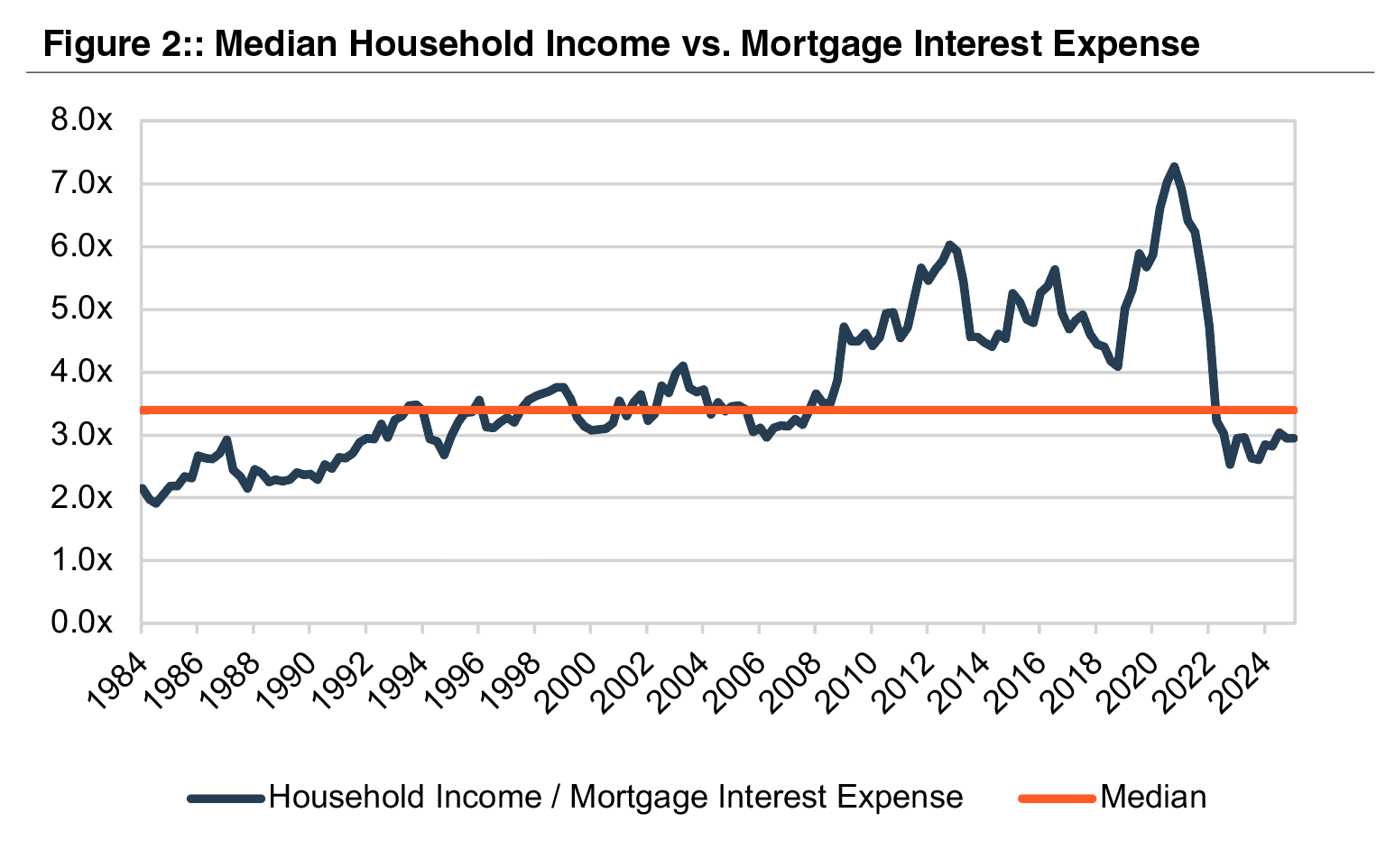

Mortgage rates were briefly around 20% in the early 1980s. Conversely, houses were much cheaper. The analysis in Figure 2 compares mortgage interest expense (using average 30-year mortgage rates and median U.S. transaction prices) to median household income since the early 1980s.
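The affordability measure is household income expressed as a multiple of mortgage interest expense. The minimal sketch below makes one simplifying assumption, which may not match the article's exact method: interest expense equals the mortgage rate times the full transaction price, ignoring down payments and amortization. The inputs are hypothetical.

```python
def affordability_ratio(household_income: float, home_price: float,
                        mortgage_rate: float) -> float:
    """Household income as a multiple of annual mortgage interest expense.

    Assumption: interest expense ~= mortgage rate x full transaction price.
    """
    interest_expense = home_price * mortgage_rate
    return household_income / interest_expense


# Hypothetical inputs: $90,000 income, $400,000 home, 6.5% 30-year rate.
print(f"{affordability_ratio(90_000, 400_000, 0.065):.1f}x")  # 3.5x
```

A higher multiple means more affordable housing. Because the rate sits in the denominator, halving the mortgage rate doubles the ratio when price and income are held fixed.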

As shown, housing was most expensive at the beginning of this analysis, when interest rates were in the mid-teens but trending lower. From 1984 to 1994, affordability improved as housing prices and income increased in step while rates were nearly cut in half. From 1994 until the beginning of the GFC, relative affordability hugged the median (3.4x) as income and home prices again increased together while mortgage rates ranged from 6% to 8%.

From the GFC until COVID, income growth outpaced the increase in housing prices while interest rates nearly halved, leading to improved affordability though post-GFC “reforms” made it more difficult to obtain a mortgage.

During COVID, the Fed engineered a sharp drop in long-term rates, including mortgage rates, by buying over $2 trillion of Agency MBS. As a result, home prices skyrocketed, yet affordability, as measured by household income as a multiple of mortgage interest expense, rose to nearly 7x vs. a long-term average of 3.4x.

This was a bit of a mirage, since 30-year mortgage rates were below 3% for a while; today they are around 6.5% after peaking near 8% in 2023. With the normalization of rates since 2022, the household income-to-mortgage interest expense ratio has fallen back to near the long-term average of 3.4x, yet housing is unaffordable for many.

So, it may take further increases in housing supply and a mild (or worse) recession to push mortgage rates lower and power a pick-up in mortgage refinancing and origination activity that would, in turn, drive better mortgage earnings. Some combination of the following might work: rates down to the high 5s, housing prices down 10% to 15%, or incomes up 15% to 20%.
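Each lever can be translated into a change in the income-to-interest-expense ratio. The sketch below assumes interest expense equals price times rate, so the ratio scales with income / (price × rate). The 6.5% starting rate comes from the text. Treating "high 5s" as 5.9% is an assumption.

```python
from typing import Optional


def ratio_change(rate_from: float = 0.065, rate_to: Optional[float] = None,
                 price_change: float = 0.0, income_change: float = 0.0) -> float:
    """Percent change in income / (price x rate) when one or more inputs move.

    Assumes interest expense = price x rate (no down payment or amortization).
    """
    rate_to = rate_from if rate_to is None else rate_to
    return (1 + income_change) / ((1 + price_change) * (rate_to / rate_from)) - 1


scenarios = {
    "rate 6.5% -> 5.9%": ratio_change(rate_to=0.059),
    "prices -10%": ratio_change(price_change=-0.10),
    "prices -15%": ratio_change(price_change=-0.15),
    "income +15%": ratio_change(income_change=0.15),
    "income +20%": ratio_change(income_change=0.20),
}
for name, change in scenarios.items():
    print(f"{name}: ratio {change:+.0%}")
```

Under these assumptions, each lever on its own lifts the ratio by roughly 10% to 20%. That is why some combination of the three, rather than any single one, may be needed to restore affordability meaningfully.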

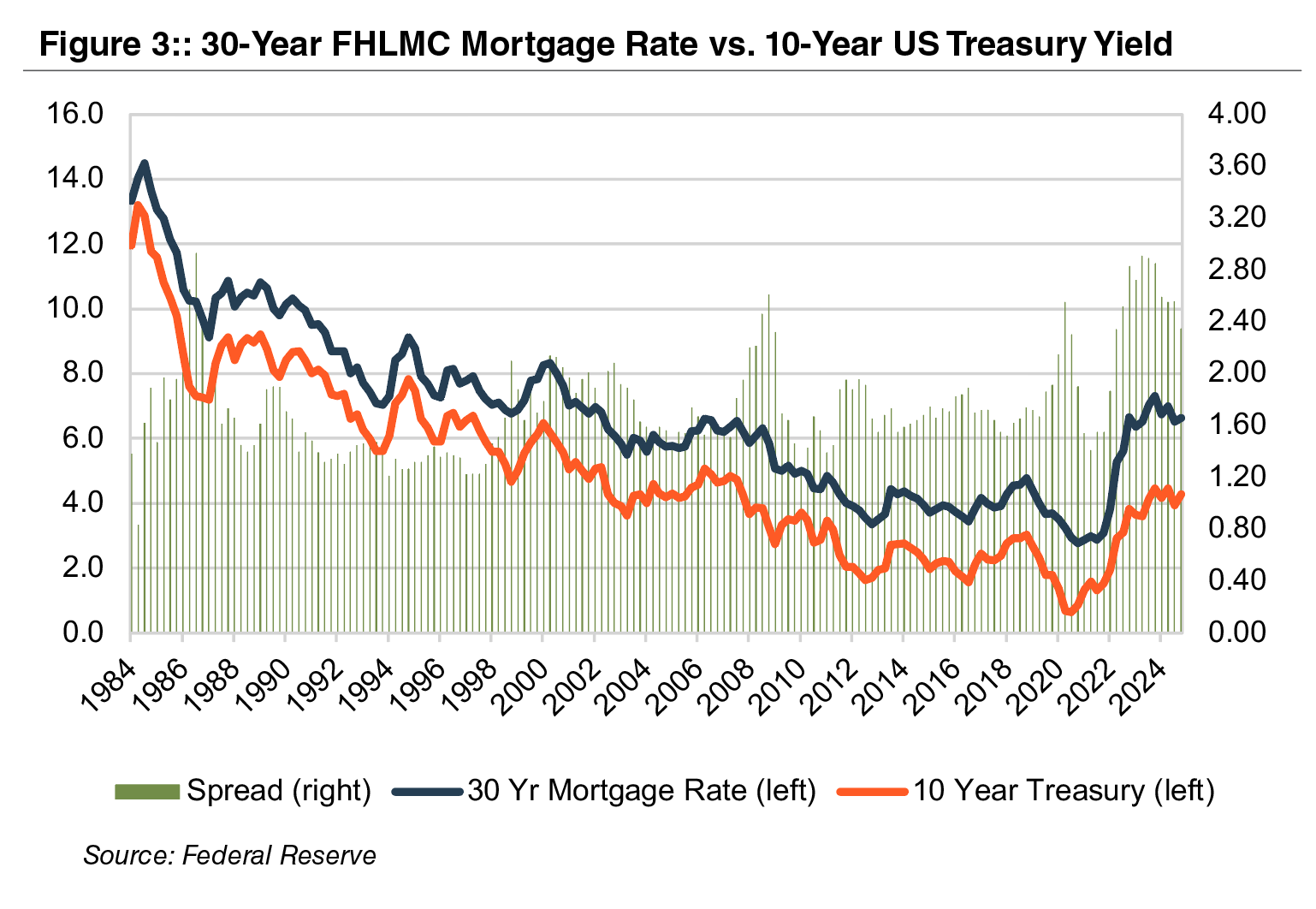

An additional technical factor working against an improvement in mortgage rates, and therefore in earnings derived from mortgage banking, is the spread between the 10-year UST yield and mortgage rates.

During the past 40 years, the average spread was 175bps. Once the Fed engaged in “Quantitative Easing” in the post-GFC years, the spread began to widen, and over the past couple of years it has gapped out to around 250bps. MBS investors are concerned that the Fed could become a price-insensitive seller as it presumably needs to shrink its Agency MBS portfolio to make room for more USTs. A return to the 175bps average spread would, all else equal, cause mortgage rates to fall below 6%.
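The "below 6%" conclusion is simple spread arithmetic. The sketch below models the mortgage rate as the 10-year UST yield plus a spread. It backs the UST level out of the ~6.5% mortgage rate and ~250bps spread cited in the article. That level is an approximation, since the actual yield is not stated. "All else equal" here means holding the UST yield fixed.

```python
def mortgage_rate(ust_10y: float, spread_bps: float) -> float:
    """Mortgage rate modeled simply as the 10-year UST yield plus a spread."""
    return ust_10y + spread_bps / 10_000


current_mortgage_rate = 0.065  # ~6.5% 30-year rate cited in the article
current_spread_bps = 250       # recent spread cited in the article
implied_ust = current_mortgage_rate - current_spread_bps / 10_000  # ~4.0%

print(f"{mortgage_rate(implied_ust, 175):.2%}")  # 5.75%
```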

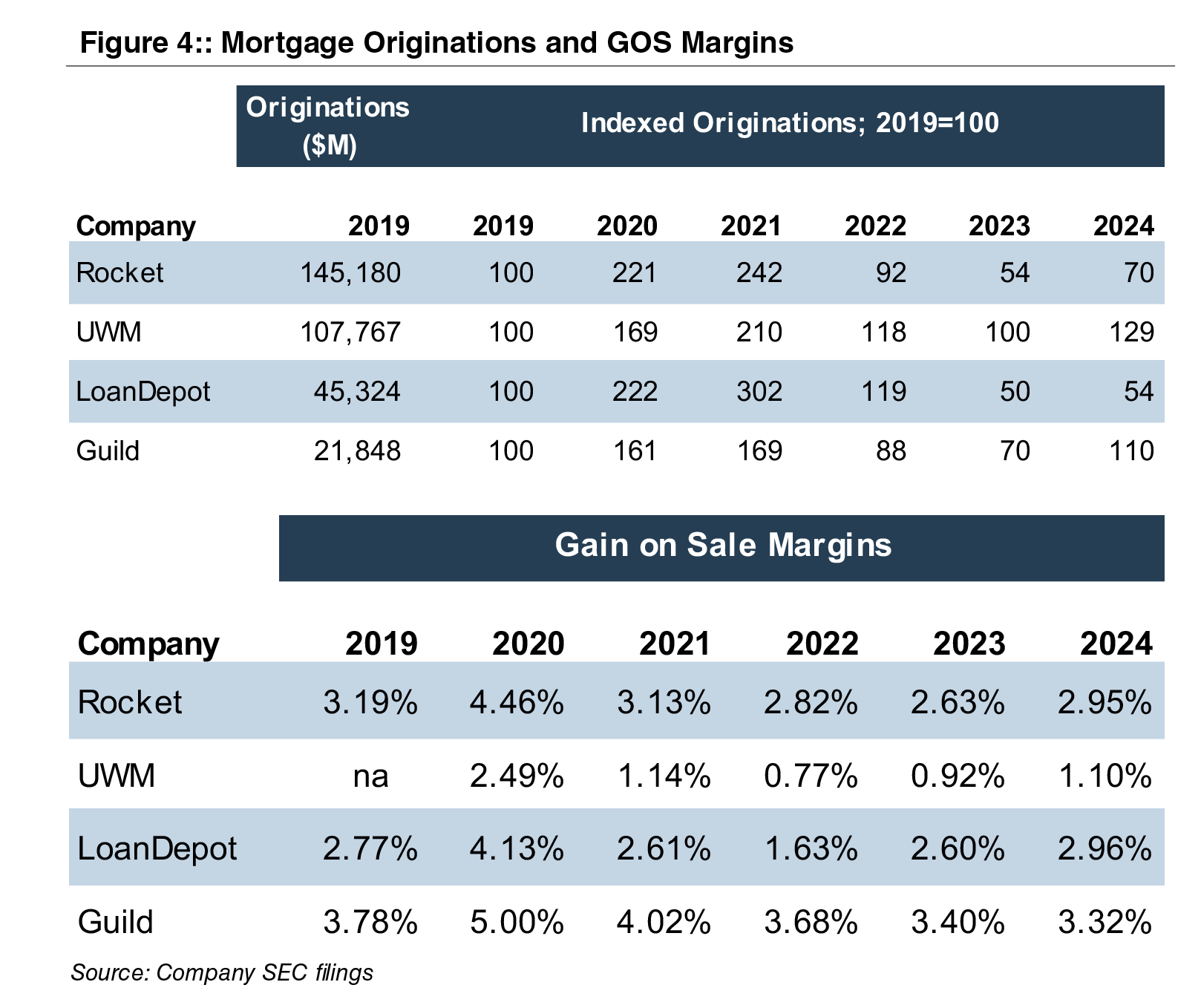

One result of the GFC reforms is that non-bank mortgage companies now originate the majority of residential mortgages. The data in Figure 4 succinctly summarize the impact of falling and rising rates on origination volumes and gain-on-sale margins. Not shown is the share of originations that are refinancings, which has been very low since rates began to rise in 2022 (consumers switched to draws on HELOCs instead). Nonetheless, 2024 was somewhat better than 2023, when long-term rates peaked, and 2025 may be somewhat better than 2024.

A vote of confidence in the future of mortgage banking has been registered by the largest originator, Rocket Companies, Inc. (NYSE:RKT). On March 10, 2025, RKT announced a $2.4 billion acquisition of Redfin Corporation (NASDAQ:RDFN), the online real estate brokerage business with 50 million monthly viewers. On March 31, RKT announced a $9.4 billion acquisition of Mr. Cooper Group Inc. (NASDAQ:COOP), bringing together the largest mortgage originator and servicer in the nation. Consideration paid to both RDFN and COOP shareholders will consist solely of RKT common stock, which will result in about 25% ownership dilution to RKT shareholders.

The transactions may signal that the bottom is in (or perhaps was put in during 2023), with better times to come for mortgage banking and for banks that have meaningful mortgage banking units.
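In an all-stock deal, the sellers' ownership stake depends on the number of shares issued through the exchange ratio. The sketch below assumes "ownership dilution" means the share of the pro forma company held by holders of the newly issued shares. On that definition, 25% dilution implies issuing about one new share for every three already outstanding. The share count is hypothetical, not RKT's.

```python
def ownership_dilution(existing_shares: float, new_shares: float) -> float:
    """Fraction of the pro forma company owned by holders of newly issued shares."""
    return new_shares / (existing_shares + new_shares)


def shares_for_dilution(existing_shares: float, dilution: float) -> float:
    """New shares that produce a given ownership dilution."""
    return existing_shares * dilution / (1.0 - dilution)


existing = 100.0  # hypothetical pre-deal share count (millions), not RKT's actual count
new = shares_for_dilution(existing, 0.25)
print(f"{new:.1f} new shares -> {ownership_dilution(existing, new):.0%} dilution")
```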

Goodwill Impairment Troubles Cost UPS $45 Million

Executive Summary

A recent $45 million settlement between UPS and the SEC over allegedly flawed goodwill impairment tests and earnings overstatements puts a spotlight on the goodwill impairment testing process. Whether it be market volatility, uncertainty around tariffs, or simply poor performance at a reporting unit (as was the case for UPS), proper evaluation of goodwill impairment testing inputs is critical to getting the process and numbers right and potentially avoiding a fine. The impairment charge in this case may have been a non-cash item but the civil penalty was a real expense.

Summary of the Allegations and Settlement

In November 2024, the SEC announced settled charges and a civil penalty of $45 million against United Parcel Service, Inc. (UPS) for materially misrepresenting its earnings due to flawed goodwill impairment tests related to its UPS Freight reporting unit.

According to the SEC Order, UPS failed to share certain information with its external valuation consultant who prepared the goodwill impairment analyses in 2019 and 2020. When the unit was ultimately sold in early 2021, the sales price was far below the fair value used to support the goodwill. A timeline of key events and issues is summarized below:

- Mid-2019 – An internal UPS corporate strategy group worked with external financial advisors on a months-long process to evaluate whether to sell the underperforming Freight unit. This analysis concluded that the likely sales price for Freight would range from $350 million to $650 million. Meanwhile, the carrying value of the unit on the balance sheet was approximately $1.4 billion.

- July 1, 2019 Goodwill Impairment Test – The company’s annual testing date was July 1, with results of the assessment reported in its third quarter filing released on October 29, 2019. The SEC Order alleges that the assumptions used by the external valuation consultant (and approved by UPS management) differed substantially from those that market participants would use to value the business.

Specifically, the projections used for revenue and profit growth were “aggressive” and the selection of guideline public companies included companies that were “not comparable” to UPS. The resulting valuation from the external valuation consultant was approximately $2.0 billion. This was far higher than the corporate strategy group’s estimated range of $350 million to $650 million (which would have also resulted in a significant impairment charge). Notably, the corporate strategy group’s estimates and projections were not shared with the valuation consultant.
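Under the current single-step approach in ASC 350 (as amended by ASU 2017-04), a goodwill impairment equals the amount by which a reporting unit's carrying amount exceeds its fair value, limited to the goodwill allocated to that unit. The minimal sketch below works in $ millions and ignores tax effects. It does not claim which version of the test UPS applied in 2019. The Freight unit's goodwill balance is not given in the text, so the figure below is hypothetical and is chosen only to show both the uncapped and capped cases.

```python
def goodwill_impairment(carrying_amount: int, fair_value: int, allocated_goodwill: int) -> int:
    """Single-step test: excess of carrying amount over fair value, capped at goodwill."""
    shortfall = max(0, carrying_amount - fair_value)
    return min(shortfall, allocated_goodwill)


CARRYING_2019 = 1_400        # Freight carrying value, mid-2019 ($ millions)
HYPOTHETICAL_GOODWILL = 900  # hypothetical; not disclosed in the text

for label, fair_value in [("consultant", 2_000), ("strategy high", 650), ("strategy low", 350)]:
    charge = goodwill_impairment(CARRYING_2019, fair_value, HYPOTHETICAL_GOODWILL)
    print(f"{label}: fair value {fair_value}, impairment {charge}")
```

At the consultant's $2.0 billion value the test passes. At either end of the corporate strategy group's range, it would have produced a material charge.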

- June – October 2020 Sale Process – In June 2020, UPS began a process to sell its Freight business and soon began negotiations with a potential buyer. Internal documents again suggested that the sale price was unlikely to exceed $650 million. In September 2020, the prospective buyer made a non-binding offer to acquire the unit for between $750 and $800 million, subject to various adjustments which were anticipated to reduce the final net price. In October 2020, UPS and the buyer signed a non-binding term sheet to move forward with the transaction.

- July 1, 2020 Goodwill Impairment Test – The Company again conducted its annual impairment test with the assistance of the external valuation consultant. The SEC Order contends that UPS gave its consultant forecasts for the Freight unit that were inconsistent with how market participants would approach the business. Further, UPS did not share details regarding the proposed sale or existence of the signed term sheet with the consultant. The consultant ultimately valued the Freight unit at $2.0 billion relative to the carrying value of $1.3 billion.

On November 2, 2020, UPS released its third quarter 10-Q, noting that “there were no events or changes in circumstances during the third quarter of 2020 that would indicate the carrying amount of our goodwill may be impaired as of November 2, 2020.”

- Sale and Impairment – On November 3, 2020 (one day after filing its 10-Q for the third quarter), the Board of Directors authorized management to conclude a sale of the Freight unit on terms consistent with the term sheet. Management informed the board that it expected to incur a goodwill impairment charge on the order of $500 million upon closing of the transaction.

On January 25, 2021, UPS announced the sale of the Freight unit for $800 million, subject to working capital and other adjustments, which would result in a pre-tax impairment charge of approximately $500 million. The expected net sale price, after adjustments, was roughly $650 million. The impairment charge reduced net income for fiscal 2020 by 20%, and lowered balance sheet goodwill and shareholders’ equity by 13% and 32%, respectively.

According to the SEC Order, UPS made materially misleading disclosures in 2019 and 2020 regarding its financial reporting that were premised on its determination that no goodwill impairment charges were required for the Freight unit. Had UPS properly valued the Freight unit, its earnings and book value would have been materially lower.

In addition to the $45 million civil penalty, UPS, without admitting or denying the SEC’s findings, agreed to adopt training requirements for certain company individuals and to retain an independent compliance consultant to review and make recommendations about the firm’s fair value estimates and disclosure obligations.

Implications and Takeaways

We’ve previously discussed the guidance for goodwill impairment testing under ASC 350 and best practices for conducting interim impairment tests. At a basic level, the financial projections and underlying fair value conclusion must reflect the expectations of market participants for the subject asset or reporting unit. But the UPS situation also illustrates a failure to follow the examples provided in the guidance when assessing events and circumstances that might make it more likely than not that an impairment condition exists. Specifically, entities should consider “changes in the carrying amount of assets at the reporting unit including the expectation of selling or disposing certain assets.”

The role of the external valuation consultant is to assist management in the preparation of a company’s financial statements for financial reporting. Obviously, no one wants to record an impairment charge, but withholding information from the valuation consultant or communicating misleading financial forecasts in the goodwill impairment testing process is not likely to end well. A non-cash impairment charge might sting, but not nearly as much as a $45 million cash settlement and other compliance penalties. This case is also a timely reminder for the valuation consultant assisting in an impairment test to ask – and management to answer, truthfully – whether the company is contemplating a sale of the subject reporting unit at the relevant measurement date(s).

Conclusion

The goodwill impairment testing process can be complex, particularly in times of market volatility and when certain business units have underperformed expectations. But the accounting guidance and valuation methodologies around fair value are well-established. The UPS case shows what can happen when the guidance and best practices are not followed.

The valuation specialists at Mercer Capital have experience in implementing both the qualitative and quantitative aspects of goodwill impairment testing under ASC 350 for public and private companies. If you have questions, please contact a member of Mercer Capital’s Financial Statement Reporting Group.

The Value of Carried Interest: A Guide for Matrimonial Litigation

Carried Interest Explained

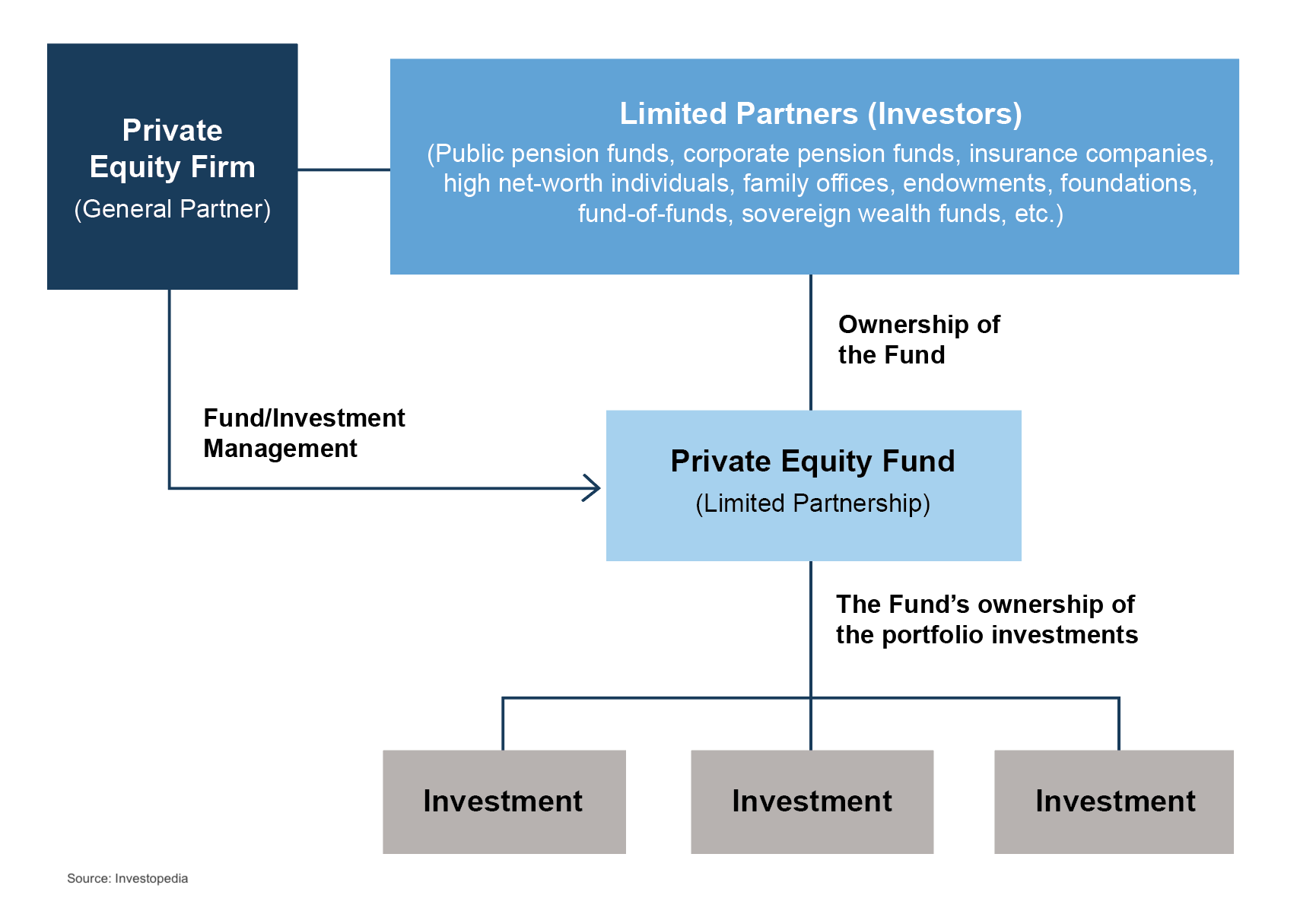

What is carried interest? It is the profits interest that a private equity, venture capital, or hedge fund principal receives if the fund exceeds certain performance benchmarks. Carried interest can also be referred to as performance allocation, incentive allocation, or promote interest in the case of real estate funds. Separately, fund principals also receive income and potential distributions from management fees and direct investments in the fund.

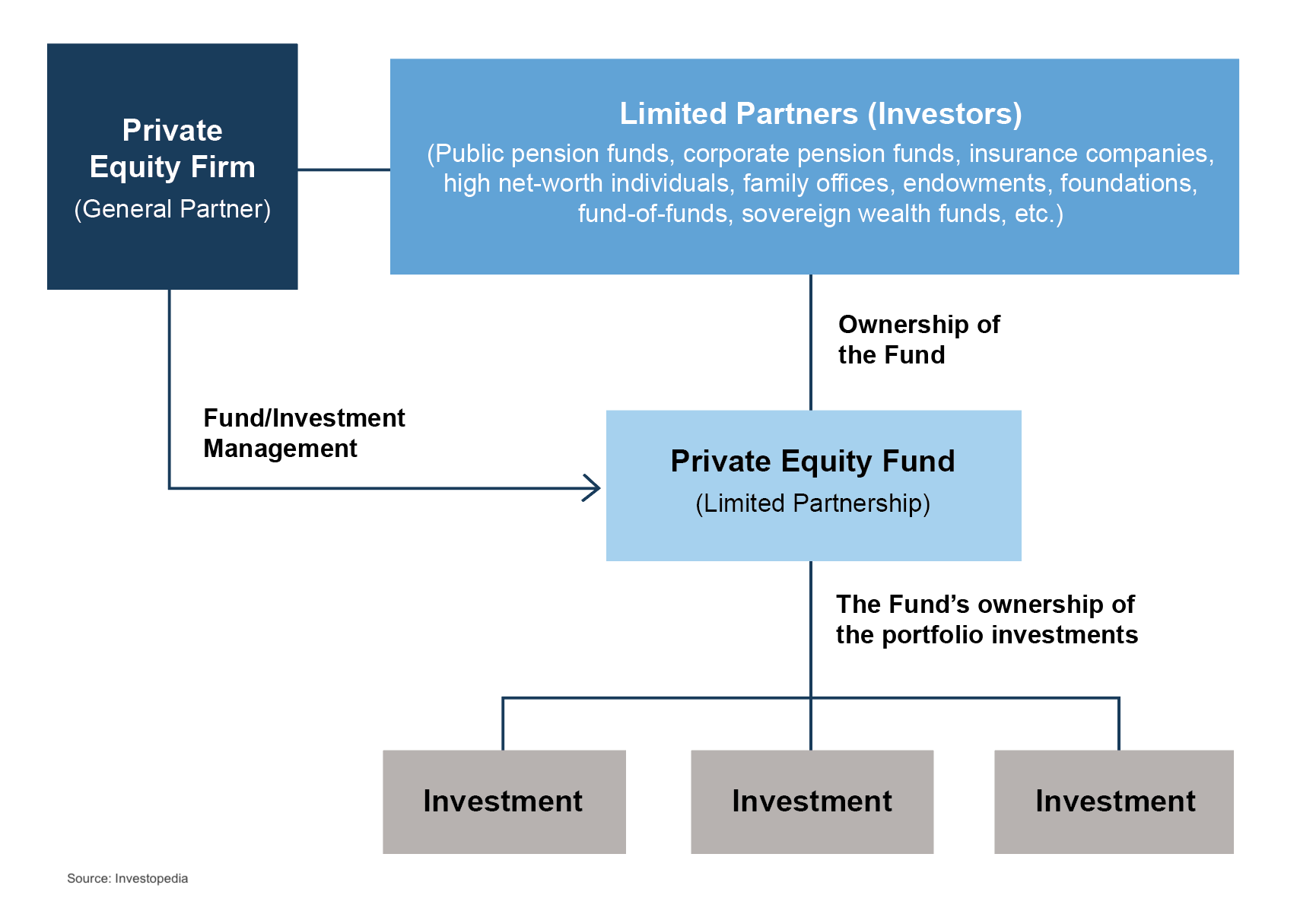

Fund Structures and Carried Interest Allocation

A basic private equity fund structure is shown below. Typically, the fund principals form a general partner entity and a management company entity. The principals then raise capital from limited partners and make investments over the term of the fund. The management company receives management fees, often around 2% per year, for investment management services provided. The general partner entity typically invests alongside the limited partners and would receive its pro-rata share of returns along with the limited partners. If the fund exceeds certain benchmarks, the general partner would also receive carried interest, often around 20% of fund returns beyond the benchmark.
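The fee and carry mechanics can be sketched in a few lines. The sketch assumes management fees are charged on committed capital. It expresses the benchmark as a hurdle rate compounded over the holding period and includes no general partner catch-up. The fund size, hurdle, and proceeds are hypothetical, and actual fund terms vary.

```python
def annual_management_fee(committed_capital: float, fee_rate: float = 0.02) -> float:
    """Management fee, assumed here to be charged on committed capital each year."""
    return committed_capital * fee_rate


def simple_carry(proceeds: float, contributed: float, hurdle_rate: float,
                 years: float, carry_rate: float = 0.20) -> float:
    """Carry as a share of fund proceeds above a compounded hurdle benchmark.

    No catch-up: the general partner earns carry only on the excess over the benchmark.
    """
    benchmark = contributed * (1.0 + hurdle_rate) ** years
    return carry_rate * max(0.0, proceeds - benchmark)


# Hypothetical $500 million fund ($ millions) with an 8% hurdle over five years.
print(annual_management_fee(500))                   # 10.0
print(round(simple_carry(1_000, 500, 0.08, 5), 1))  # 53.1
```

The general partner's pro-rata return on its own investment alongside the limited partners is separate from carry and is not modeled here.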

It is important to note that private equity fund structures come in many forms, from basic to complex. Even though this article is focused primarily on private equity funds, similar concepts apply to certain hedge funds and venture capital funds.

Navigating Uncertainty and Valuation Challenges

Uncertainty exists regarding the fund’s performance, typically more so earlier in the fund’s life. When the fund entities are formed, and before capital is raised, there is also uncertainty regarding how much capital will be raised. These uncertainties result in lower values for the fund entities at inception. The fund entity values will increase throughout the life of the fund if the fund is able to successfully raise capital and achieve investment returns beyond the benchmark to generate carried interest cash flows.

At inception, the values of the fund entities will be relatively low, particularly the value of potential carried interest. As the fund raises capital and then begins to make investments, the values of the fund entities will change, potentially significantly.

Challenges in Valuing Interests in Fund Entities

An appraisal prepared by a qualified appraiser with the requisite experience is paramount when determining the value of fund entity interests, given the complexity involved. In addition to fund structure, other items that require appraiser familiarity with carried interest include the following:

- Fundraising: For new funds, what is the fund’s target size? And will the fund offer special terms for early investors or for a large “anchor” investor? For funds already operating, how much capital has been raised and how much additional capital does the fund expect to raise? And are there different classes of investors with different terms?

- Fund investment strategy: For new funds, what types of investments will the fund make in terms of asset classes, industries, size of interests, number of investments, etc.? How will the fund’s investments shape expected returns for the fund? What is the expected holding period for the fund’s investments? Will capital from the sales of investments be reinvested? Will the fund use leverage? And for funds already operating, what types of investments does the fund hold? What have the returns been to date? What is the outlook for the fund’s investments in terms of future returns and remaining holding periods?

- Fund terms: How is the management fee calculated and how often will it be assessed? Who will pay for fund expenses? Is there a general partner catch-up after the hurdle is reached? Are distributions made on a deal-by-deal basis, or on a cumulative basis? Can the general partner waive management fees associated with their direct investments in the fund? And for funds already operating, has any carried interest been accrued yet?
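The catch-up question can materially change the general partner's share. The sketch below shows a whole-fund (cumulative, often called "European") distribution waterfall with four steps:

1. Return of capital.
2. A compounded preferred return.
3. A 100% catch-up to the GP.
4. An 80/20 split of the remainder.

The preferred return rate, the 100% catch-up, and the inputs are hypothetical. For simplicity, the GP's own capital is treated as part of contributed capital. A deal-by-deal structure would instead run this logic per investment.

```python
def european_waterfall(proceeds: float, capital: float, pref_rate: float, years: float,
                       carry: float = 0.20, catch_up: bool = True) -> tuple[float, float]:
    """Split fund proceeds between limited partners (LP) and the general partner (GP)."""
    remaining = proceeds
    lp = gp = 0.0

    # 1. Return of contributed capital to limited partners.
    paid = min(remaining, capital)
    lp += paid
    remaining -= paid

    # 2. Preferred return (compounded over the holding period) to limited partners.
    pref = capital * ((1.0 + pref_rate) ** years - 1.0)
    pref_paid = min(remaining, pref)
    lp += pref_paid
    remaining -= pref_paid

    # 3. Catch-up: 100% to the GP until it holds `carry` of all profits paid so far.
    if catch_up:
        target = pref_paid * carry / (1.0 - carry)
        paid = min(remaining, target)
        gp += paid
        remaining -= paid

    # 4. Residual split (80/20 with the default carry).
    gp += remaining * carry
    lp += remaining * (1.0 - carry)
    return lp, gp


for flag in (True, False):
    lp, gp = european_waterfall(250, 100, 0.08, 5, catch_up=flag)
    print(f"catch-up={flag}: LP {lp:.1f}, GP {gp:.1f}")
```

With a full catch-up, the GP ends up with 20% of total profit (30 of 150 here). Without one, carry applies only above the preferred return (about 20.6 here).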

Funds already operating can be more complicated to value than new funds. In addition to understanding all of these items, the fund’s performance to date has to be reconciled with expectations going forward.

TIP: For new funds, the Private Placement Memorandum will typically be a good starting point to answer many of these questions. Additionally, governing documents for the limited partnership, the general partnership, the management company, and any other entities related to the fund will be useful. For existing funds, financial statements for each of the entities are needed to value the entities, in addition to the information needed for new funds.

Conclusion

In conclusion, valuing fund entities, including carried interest, in newly formed or existing funds requires a complex and thorough analysis. It is essential to work with qualified appraisers familiar with the complexities of fund structures, tax regulations, and local matrimonial law statutes to ensure optimal outcomes. Engaging with experienced advisors can increase the likelihood of an equitable outcome in divorce.

The professionals at Mercer Capital have experience in the valuation of carried interests. For more information or to discuss a valuation issue, feel free to contact us.

Medical Device Industry Outlook – Five Long-Term Trends to Watch

Medical Devices Overview

The medical device manufacturing industry produces equipment designed to diagnose and treat patients. Medical devices range from simple tongue depressors and bandages to complex programmable pacemakers and sophisticated imaging systems. Major product categories include surgical implants and instruments, medical supplies, electro-medical equipment, in-vitro diagnostic equipment and reagents, irradiation apparatuses, and dental goods.

The following outlines five structural factors and trends that influence demand and supply of medical devices and related procedures.

1. Demographics

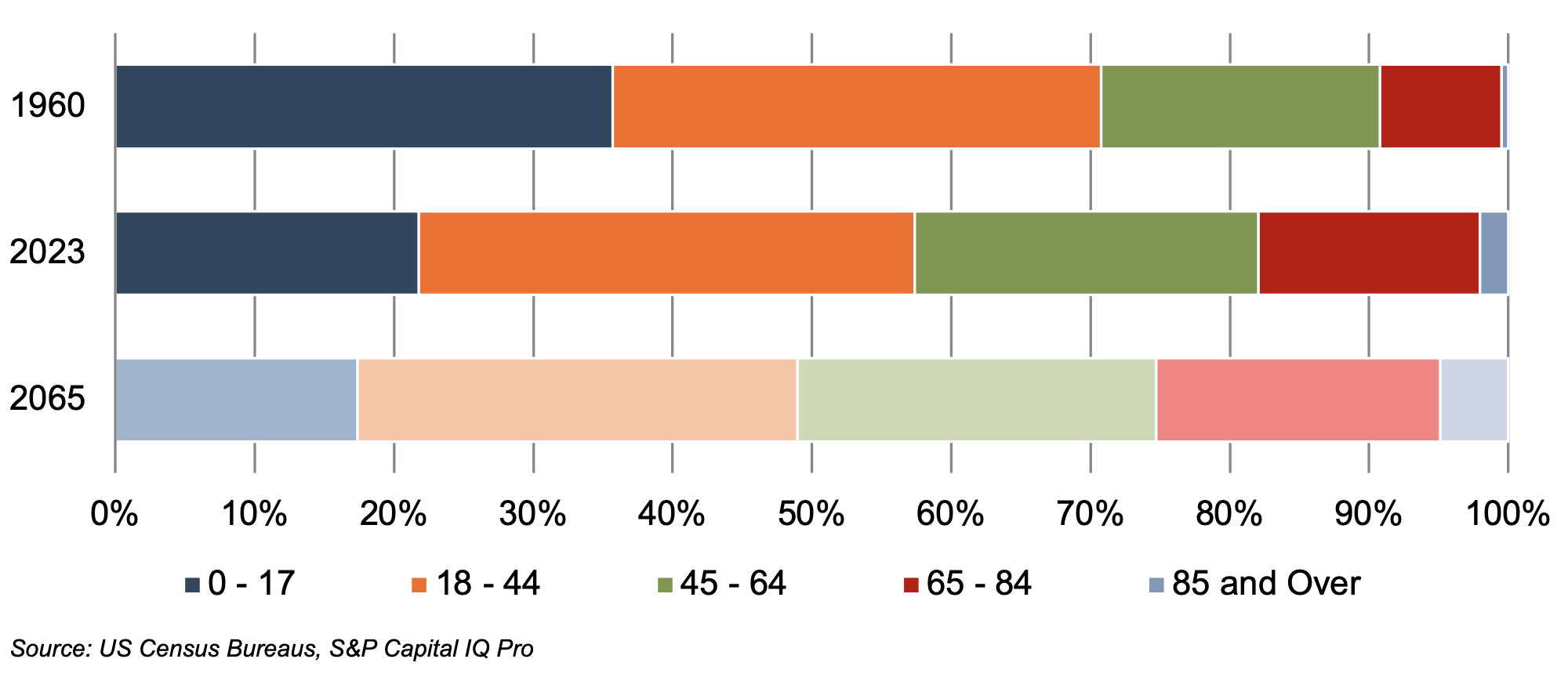

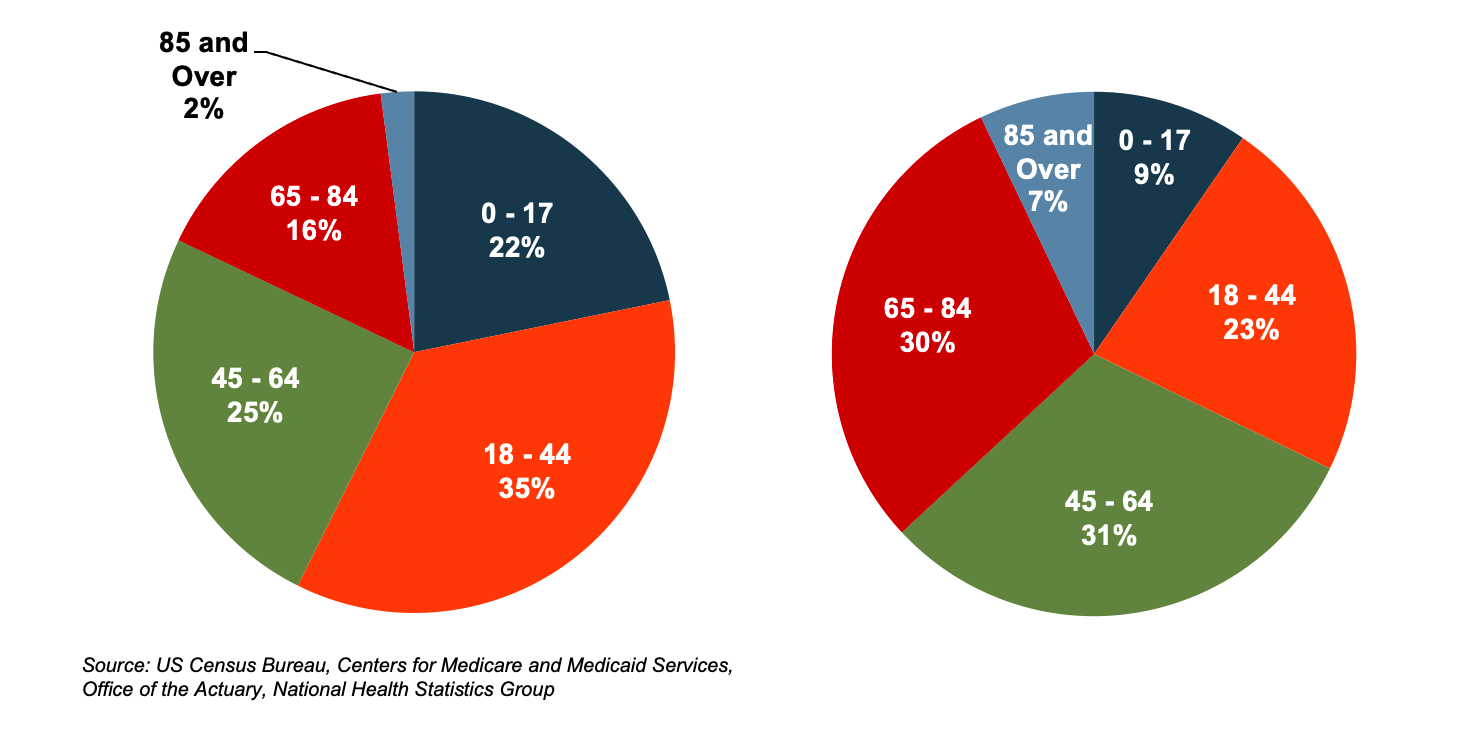

The aging population, driven by declining fertility rates and increasing life expectancy, represents a major demand driver for medical devices. The U.S. elderly population (persons aged 65 and above) totaled 60.0 million in 2023 (18% of the population). The U.S. Census Bureau estimates that the elderly will number 92.7 million by 2065, representing more than 25% of the total population.

The elderly account for nearly one third of total healthcare consumption in the U.S. Personal healthcare spending for the population segment was approximately $22,000 per person in 2020, 5.5 times the spending per child (about $4,000) and more than double the spending per working-age person (about $9,000).

U.S. Population Distribution by Age Group (left) and U.S. Healthcare Cost Distribution by Age Group (right)

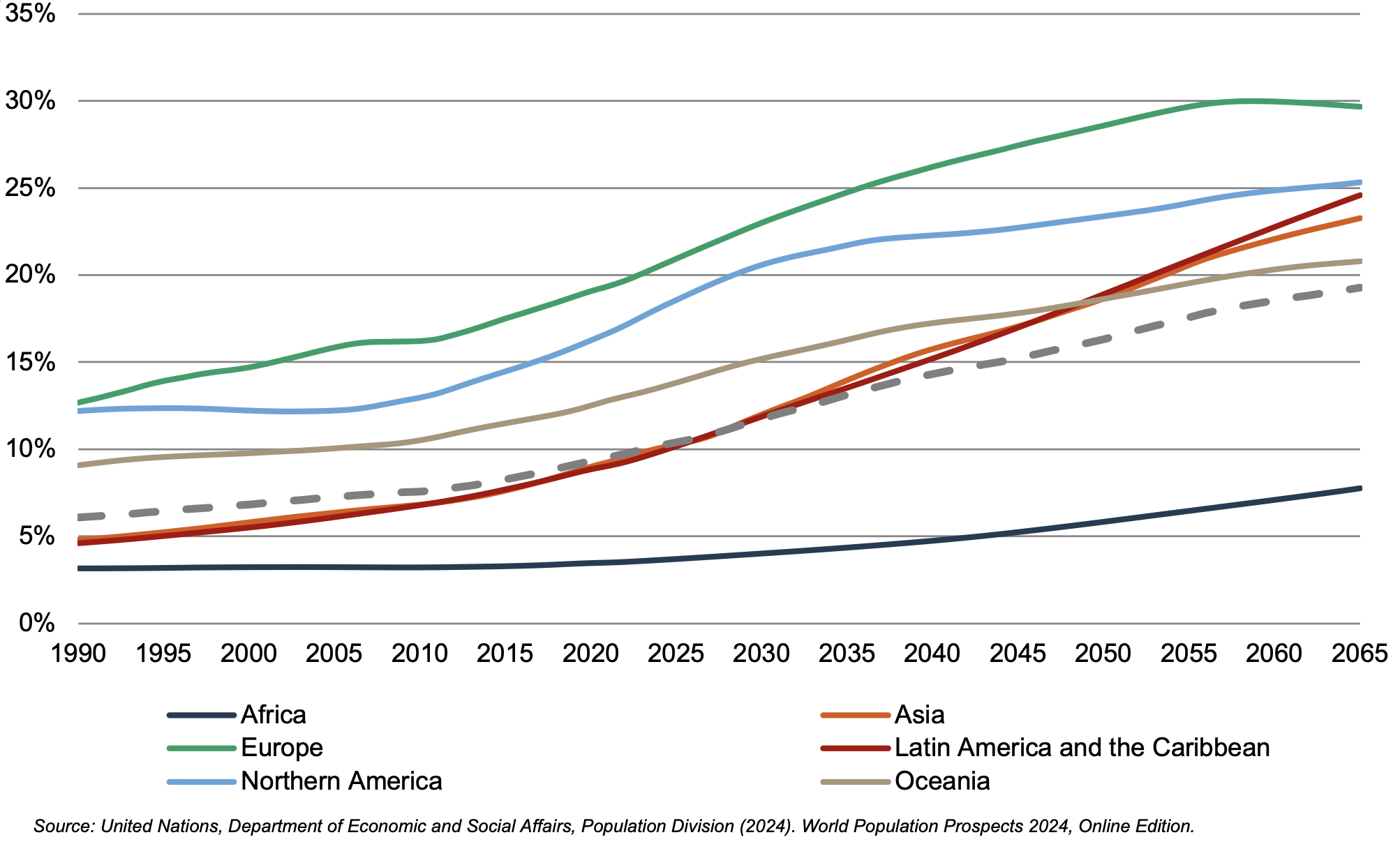

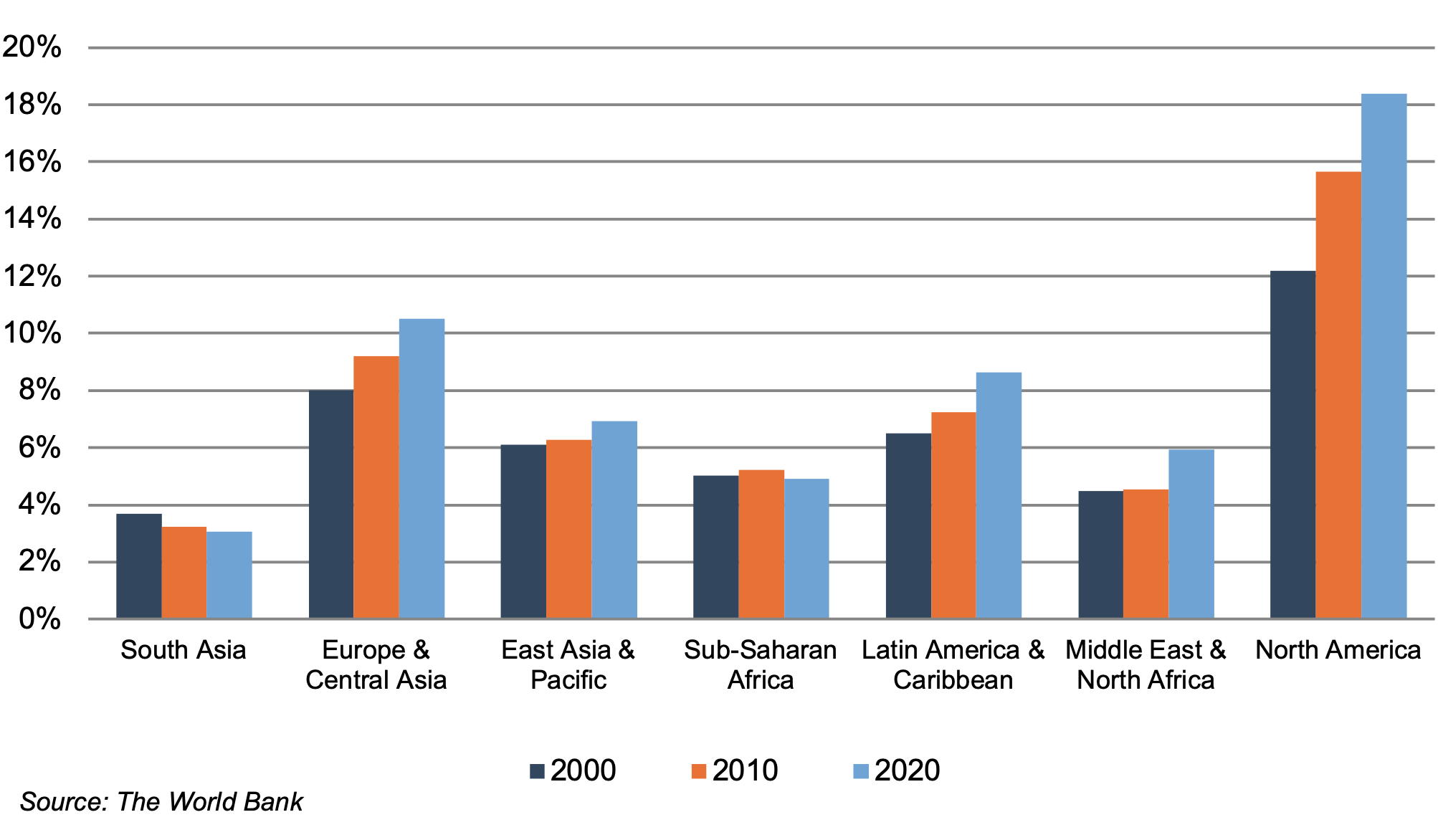

World Population 65 and Over (% of Total)

According to United Nations projections, the global elderly population will rise from approximately 809.0 million (10% of world population) in 2023 to 1.9 billion (19.3% of world population) in 2065. Europe’s elderly made up 20.1% of the total population in 2023, and the proportion is projected to reach 29.7% by 2065, making it the world’s oldest region. Latin America and the Caribbean is currently one of the youngest regions in the world, with the elderly at 9.5% of the total population in 2023. However, the region is expected to undergo a drastic transformation over the next several decades, with the elderly share expected to expand to 24.6% of the total population by 2065. North America has an above-average elderly share as of 2023 (17.6%), which is projected to expand to 25.3% by 2065.

2. Healthcare Spending and the Legislative Landscape in the U.S.

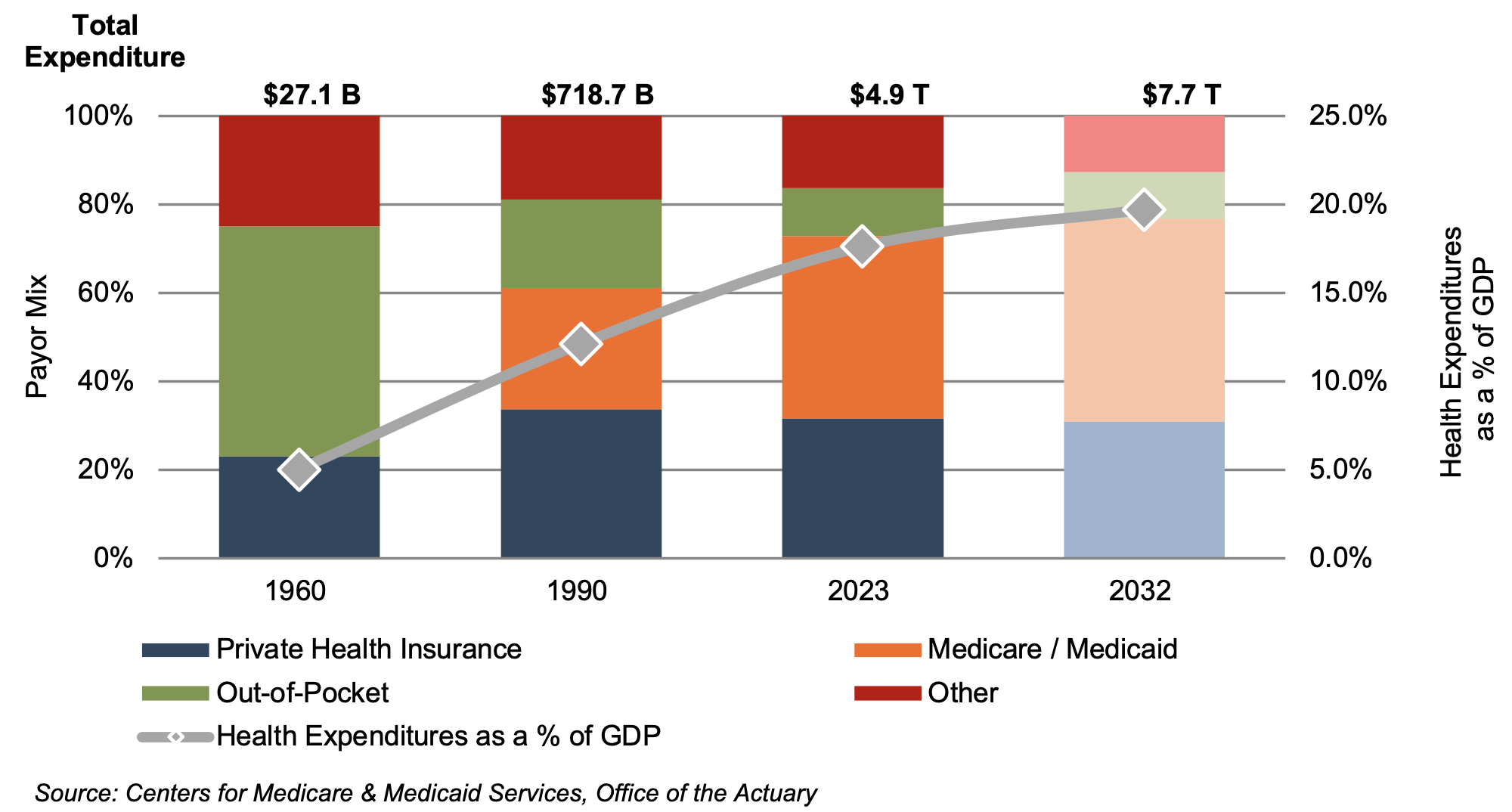

Demographic shifts underlie the expected growth in total U.S. healthcare expenditure from $4.9 trillion in 2023 to $7.7 trillion in 2032, an average annual growth rate of 5.6%. This projected average annual growth rate is slightly higher than the observed rate of 5.1% between 2013 and 2022, implying some acceleration in expected spending. Projected growth in annual spending for Medicare (average annual growth of 7.0%) and Medicaid (average annual growth of 5.2%) is expected to contribute substantially to the increase in national health expenditure over the coming decade. Growth in national healthcare spending, after significant growth in 2020 of 10.6%, slowed to 3.2% in 2021 and 4.1% in 2022 before increasing to 7.5% in 2023. Healthcare spending as a percentage of GDP is expected to increase from 17.6% in 2023 to 19.7% by 2032.

U.S. Healthcare Consumption Payor Mix and as a % of GDP

Since inception, Medicare has accounted for an increasing proportion of total U.S. healthcare expenditures. Medicare currently provides healthcare benefits for an estimated 65 million elderly and disabled people and constituted approximately 10% of the federal budget in 2021. Medicare spending growth is expected to average 7.1% from 2025 to 2026. Medicare is one of the largest payors, constituting 21% of total health spending in 2021. Medicare accounts for 26% of spending on hospital care, 26% of physician and clinical services, and 32% of retail prescription drug sales.

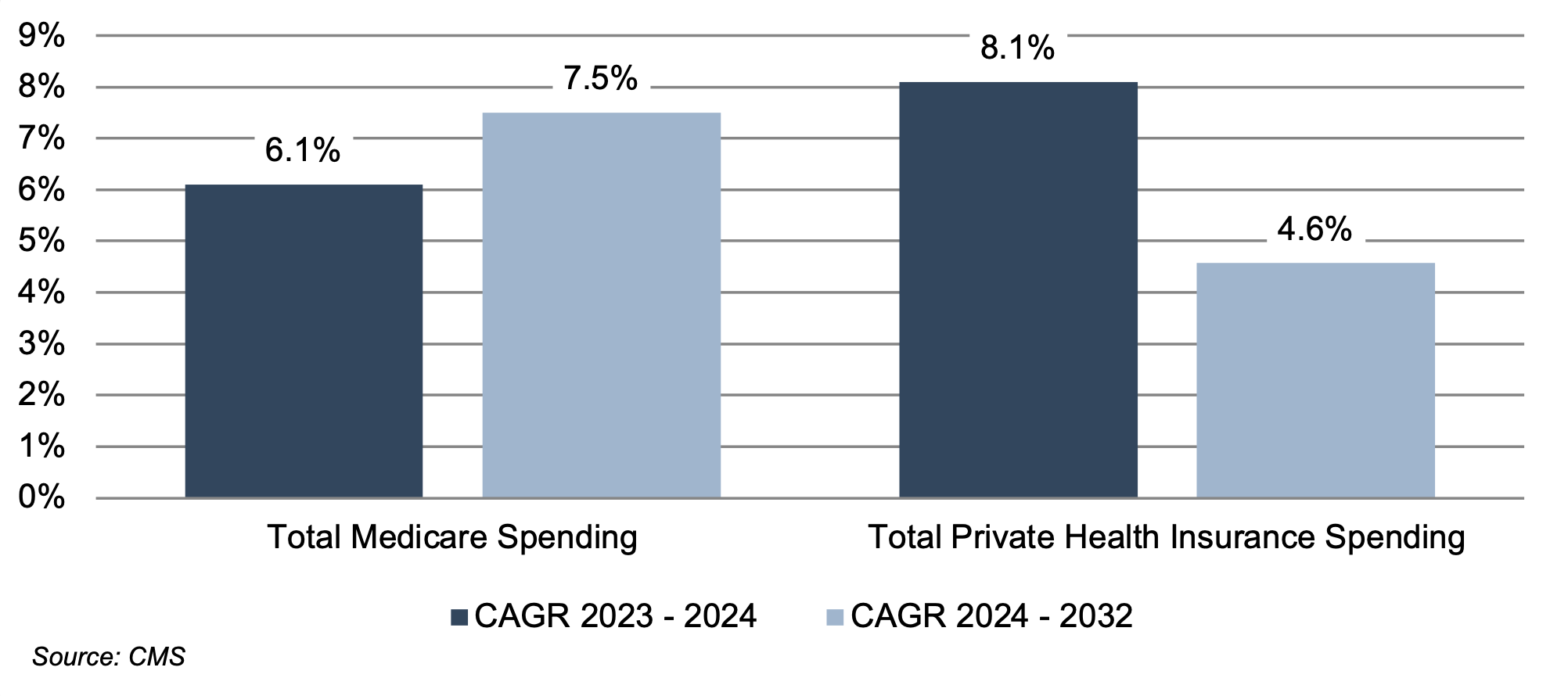

Average Spending Growth Rates, Medicare and Private Health Insurance

Due to the growing influence of Medicare in aggregate healthcare consumption, legislative developments can have a potentially outsized effect on the demand and pricing for medical products and services. In early 2025, there were indications of potential scrutiny of Medicare and Medicaid. Future updates of this outlook will incorporate any changes that may occur. Medicare spending totaled $1.0 trillion in 2023 and is expected to reach $1.9 trillion by 2032.

The Inflation Reduction Act (“IRA”) was signed into law by President Biden in August 2022. Among other items, the IRA aims to lower prescription drug costs and improve access to prescription drugs for Medicare enrollees. Two healthcare spending-related items in the IRA are out-of-pocket caps for insulin products ($35 for each month’s supply under Part D and Part B) and a $2,000 annual out-of-pocket spending cap for drugs under Medicare Part D. These provisions could significantly affect out-of-pocket spending on prescription drugs, which is projected to decline by 5.9% and 4.2% in 2024 and 2025, respectively.

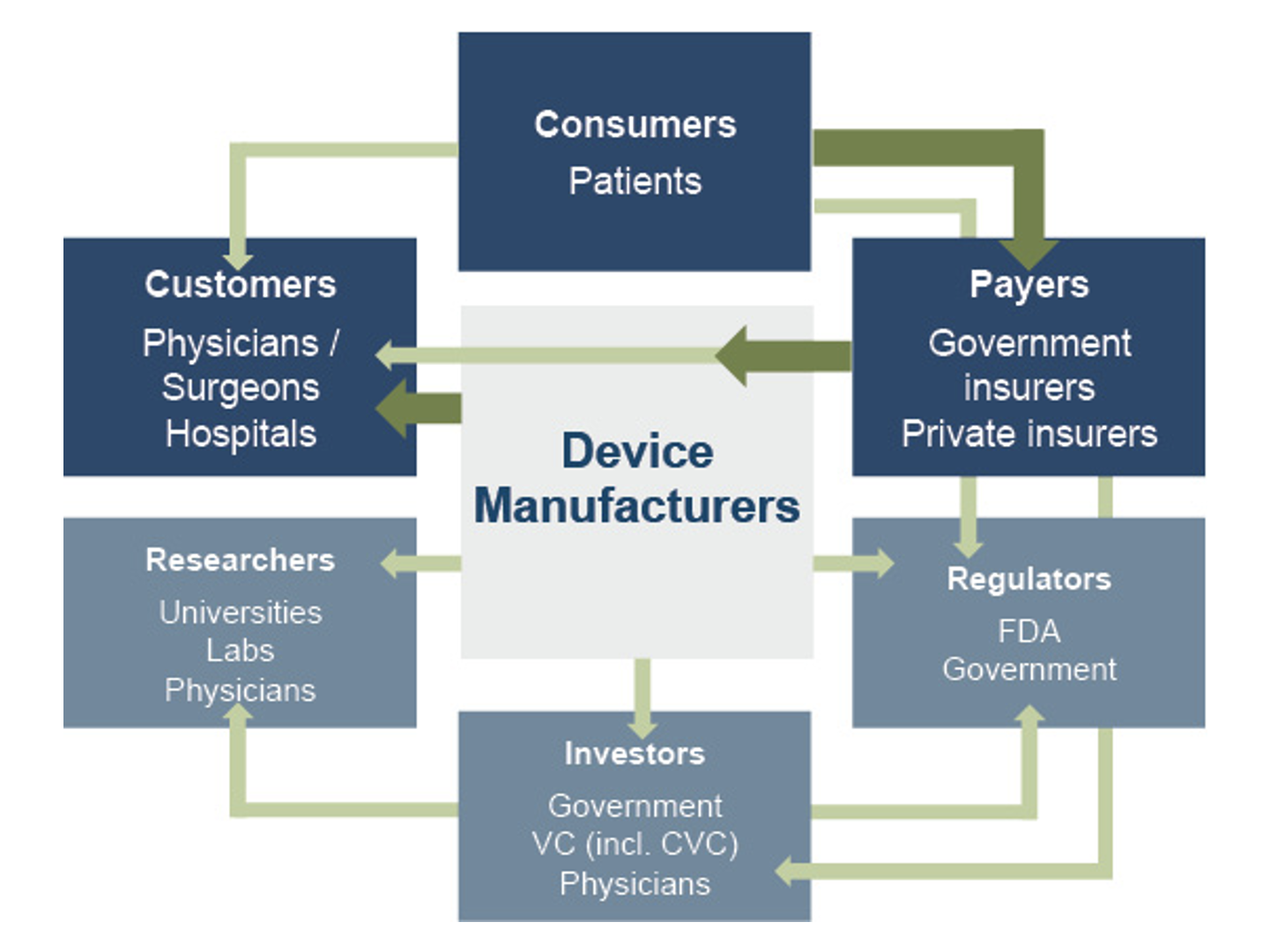

3. Third-Party Coverage and Reimbursement

The primary customers of medical device companies are physicians (and/or product approval committees at their hospitals), who select the appropriate equipment for the consumers (patients). In most developed economies, the consumers themselves are one (or more) steps removed from interactions with manufacturers and, therefore, from the pricing of medical devices. Device manufacturers ultimately receive payments from insurers, who usually reimburse healthcare providers for routine procedures (rather than for specific components like the devices used). Accordingly, medical device purchasing decisions tend to be largely disconnected from price.

Third-party payors (both private and government programs) are keen to reevaluate their payment policies to constrain rising healthcare costs. Hospitals and other care settings form the largest market for medical devices. Lower reimbursement growth will likely persuade hospitals to scrutinize medical purchases by adopting i) higher standards to evaluate the benefits of new procedures and devices, and ii) a more disciplined price bargaining stance.

The transition of the healthcare delivery paradigm from fee-for-service (FFS) to value models is expected to lead to fewer hospital admissions and procedures, given the focus on cost-cutting and efficiency. In 2015, the Department of Health and Human Services (HHS) announced goals to have 85% and 90% of all Medicare payments tied to quality or value by 2016 and 2018, respectively, and 30% and 50% of total Medicare payments tied to alternative payment models (APM) by the end of 2016 and 2018, respectively. A report issued by the Health Care Payment Learning & Action Network (HCPLAN), a public-private partnership launched in March 2015 by HHS, found that 33.7% of (traditional) Medicare payments were tied to APMs categorized as 3B and above in 2023, compared to 30.2% in 2022. HCPLAN has set goals of reaching 50% in 2024, 60% in 2025 and 100% in 2030. These goals are aligned with the CMS Innovation Center (CMMI) goal of having 100% of traditional Medicare beneficiaries with Parts A and B in care relationships with accountability for quality and total cost of care.

In 2020, CMS released guidance for states on how to advance value-based care across their healthcare systems, emphasizing Medicaid populations, and to share pathways for adoption of such approaches. CMS states that value-based care advances health equity by putting focus on health outcomes of every person, encouraging health providers to screen for social needs, requiring health professionals to monitor and track outcomes across populations, and engaging with providers who have historically worked in underserved communities. Ultimately, lower reimbursement rates and reduced procedure volume will likely limit pricing gains for medical devices and equipment.

The medical device industry faces similar reimbursement issues globally, as the European Union (EU) and other jurisdictions face similar increasing healthcare costs. A number of countries have instituted price ceilings on certain medical procedures, which could deflate the reimbursement rates of third-party payors, forcing down product prices. Industry participants are required to report manufacturing costs, and medical device reimbursement rates are set potentially below those figures in certain major markets like Germany, France, Japan, Taiwan, Korea, China, and Brazil. Whether third-party payors consider certain devices medically reasonable or necessary for operations presents a hurdle that device makers and manufacturers must overcome in bringing their devices to market.

4. Competitive Factors and Regulatory Regime

Historically, much of the growth of medical technology companies has been predicated on continual product innovations that make devices easier for doctors to use and improve health outcomes for the patients. Successful product development usually requires significant R&D outlays and a measure of luck. If viable, new devices can elevate average selling prices, market penetration, and market share.

Government regulations curb competition in two ways to foster an environment where firms may realize an acceptable level of returns on their R&D investments. First, firms that are first to the market with a new product can benefit from patents and intellectual property protection giving them a competitive advantage for a finite period. Second, regulations govern medical device design and development, preclinical and clinical testing, premarket clearance or approval, registration and listing, manufacturing, labeling, storage, advertising and promotions, sales and distribution, export and import, and post market surveillance.

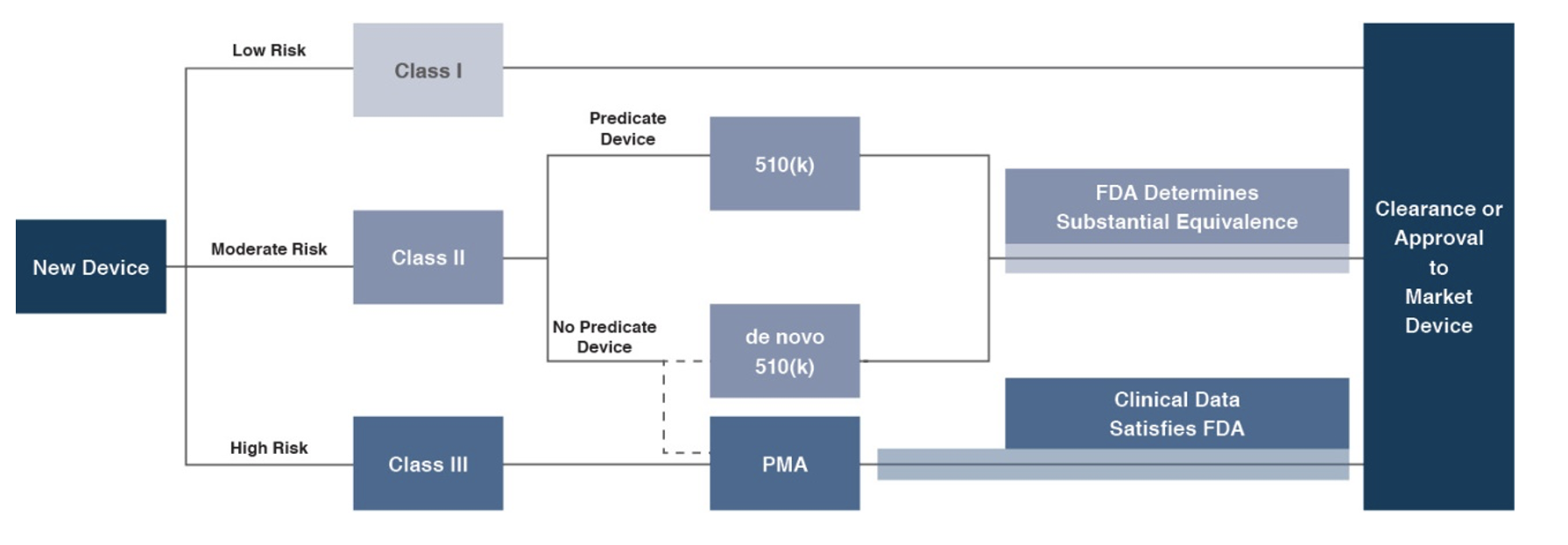

Regulatory Overview in the U.S.

In the U.S., the FDA generally oversees the implementation of the second set of regulations. Some relatively simple devices deemed to pose low risk are exempt from the FDA’s clearance requirement and can be marketed in the U.S. without prior authorization. For the remaining devices, commercial distribution requires marketing authorization from the FDA, which comes in primarily two flavors.

1) The premarket notification (“510(k) clearance”) process requires the manufacturer to demonstrate that a device is “substantially equivalent” to an existing device (“predicate device”) that is legally marketed in the U.S. The 510(k) clearance process may occasionally require clinical data and generally takes between 90 days and one year to complete. In November 2018, the FDA announced plans to change elements of the 510(k) clearance process, including measures to encourage device manufacturers to use predicate devices that have been on the market for no more than 10 years. In early 2019, the FDA announced an alternative 510(k) program that gives manufacturers of certain “well-understood device types” an easier path: they can demonstrate substantial equivalence through objective safety and performance criteria. In February 2020, the FDA launched a voluntary pilot of the electronic Submission Template and Resource (eSTAR), an interactive template that medical device submitters may use to prepare certain premarket submissions. Since October 2023, all 510(k) submissions have been required to use eSTAR unless exempted.

2) The premarket approval (“PMA”) process is more stringent, time-consuming, and expensive. A PMA application must be supported by valid scientific evidence, which typically entails collection of extensive technical, preclinical, clinical, and manufacturing data. Once the PMA is submitted and found to be complete, the FDA begins an in-depth review, which is required by statute to take no longer than 180 days. However, the process typically takes significantly longer and may require several years to complete.

Under the Medical Device User Fee Amendments (MDUFA), the FDA collects user fees for the review of devices for marketing clearance or approval. The current iteration, MDUFA V, took effect in October 2022. Under MDUFA V, the FDA is authorized to collect $1.8 billion in user fee revenue over the five-year cycle, up from approximately $1 billion under MDUFA IV, which covered 2017 to 2022. A significant change from MDUFA IV to MDUFA V relates to performance goals for De Novo classification requests (requests covering novel medical devices for which general controls alone, or general and special controls, provide reasonable assurance of safety and effectiveness for the intended use). The FDA has also updated its PMA review goals, aiming to conduct substantive reviews within 90 calendar days for all original PMAs, panel-track supplements, and 180-day supplements.

Regulatory Overview Outside the U.S.

The European Union (EU) and countries such as Japan, Canada, and Australia all operate strict regulatory regimes similar to the FDA’s, and the international consensus is moving toward more stringent regulation. Stricter regulations for new devices may delay release dates and may negatively affect companies within the industry.

Medical device manufacturers face a single regulatory framework across the EU: Regulation (EU) 2017/745, also known as the European Union Medical Device Regulation (EU MDR). Published in 2017, the regulation replaced the medical device directives that had been in place since the 1990s, and its requirements became applicable to all medical devices sold in the EU in May 2021. The EU is the second-largest medical device market in the world, behind only the United States, with total medical device sales expected to exceed €170 billion by 2027. The EU MDR has introduced stricter requirements for medical device manufacturers, including increased clinical evidence and post-market surveillance. Consequently, there is an increased risk of longer approval processes and delays in manufacturing these devices.

5. Emerging Global Markets

Emerging economies are claiming a growing share of global healthcare consumption, including medical devices and related procedures, owing to relative economic prosperity, growing medical awareness, and increasing (and increasingly aging) populations. According to the WHO, middle-income countries such as China, Turkey, and Peru are converging rapidly toward higher levels of health spending as their incomes grow. As countries grow richer, demand for health care increases along with public expectations for government-funded healthcare. Upper-middle-income countries accounted for 17.5% of total global healthcare spending in 2021, up from 10.5% in 2000.

As global health expenditure continues to increase, sales to countries outside the U.S. represent a potential avenue for growth for domestic medical device companies. According to the World Bank, all regions (except Sub-Saharan Africa and South Asia) have seen an increase in healthcare spending as a percentage of total output over the last two decades.

According to data from Fortune Business Insights, global medical device sales are projected to grow 6.3% annually from 2024 to 2032, reaching nearly $887 billion. While the Americas are projected to remain the world’s largest medical device market, the Asia Pacific market is expected to grow at a faster pace over the next several years.
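As a rough consistency check, the short sketch below backs out the 2024 starting value implied by the two figures cited above. It assumes the 6.3% is a compound annual growth rate applied over eight annual periods (2024 to 2032); the function names are ours, and the implied figure will not necessarily match the publisher’s own base-year estimate.

```python
def implied_base(end_value: float, annual_rate: float, years: int) -> float:
    """Back out a starting value from an ending value and a compound annual rate.

    end_value = start_value * (1 + annual_rate) ** years
    """
    return end_value / (1 + annual_rate) ** years


def project(start_value: float, annual_rate: float, years: int) -> float:
    """Grow a starting value forward at a compound annual rate."""
    return start_value * (1 + annual_rate) ** years


# Figures cited in the text: nearly $887 billion by 2032, 6.3% per year from 2024.
END_2032 = 887.0       # $ billions
CAGR = 0.063
YEARS = 2032 - 2024    # assumption: eight annual compounding periods

base_2024 = implied_base(END_2032, CAGR, YEARS)

# Round-trip check: growing the implied base forward recovers the 2032 figure.
assert abs(project(base_2024, CAGR, YEARS) - END_2032) < 1e-9

print(f"Implied 2024 market size: ${base_2024:,.0f} billion")
```

Under these assumptions the projection implies a 2024 market of roughly $544 billion, meaning sales would need to grow by about 63% over the period (1.063 raised to the eighth power is about 1.63).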

World Health Expenditure as a % of GDP

Summary

Demographic shifts underlie the long-term market opportunity for medical device manufacturers. While cost-control efforts by the government insurer in the U.S. (and elsewhere) may limit future pricing growth for incumbent products, a growing global market gives domestic device manufacturers an opportunity to broaden and diversify their geographic revenue base. Developing new products and procedures is risky and usually more resource-intensive than in some other growth sectors of the economy. However, barriers to entry in the form of existing regulations provide a measure of relief from competition, especially for newly developed products.

Post-Script – Outlook for the Balance of 2025

The medical device industry, if not the broader body politic, looked to have put the COVID-19 pandemic firmly behind it by 2024. COVID-era convulsions specific to the industry included, on the demand side, deferrals of many elective procedures early in the pandemic and, on the supply side, disruptions to the global supply chains involved in manufacturing devices and equipment. Procedure volumes had largely caught up within a couple of years of the pandemic’s onset, and most manufacturers appeared to have worked through their supply chain and inventory issues by 2024. Back to focusing on the more routine, longer-term demographic and other trends?

Well, maybe not quite so fast. As we were updating this note in mid-April, a few developments came to light that many observers may not have fully anticipated even at the start of the year. First, the U.S. has embarked on a deliberate push to alter the global trade landscape. While the specifics of the new regime remain uncertain and in flux, it is fair to assume that greater trade friction will affect device manufacturers that rely on global supply chains. Such friction will surely introduce costs, including welfare losses, that will be borne to varying degrees by everyone involved: component suppliers, manufacturers, caregivers, end-user patients, and entire nations.

Second, the U.S. payor landscape could change, as the government appears ready to scrutinize the Medicaid and Medicare programs. Again, the specifics of any such changes are unknown and uncertain. However, following our discussion of the second trend in an earlier section of this article, it bears considering that any changes to these programs will percolate to care settings, potentially affecting everyone involved.

Finally, in this space we are fond of noting the technological changes likely ahead of us. Last year, we highlighted GLP-1 drugs, and deservedly so, even with the benefit of hindsight. At the moment, artificial intelligence dominates the zeitgeist. For medtech companies, AI has the potential to bring about technological change across a wide range of functions, from product design and testing, to the incorporation and analysis of large datasets to enhance procedure outcomes, to business process improvements. We will remain highly curious observers over both the short and long term.

Stryker Corporation (SYK): Many Acquisitions in 2024

Stryker Corporation (NYSE:SYK) is a global leader in medical technologies, offering products and services in MedSurg, Neurotechnology, and Orthopedics that help improve patient and healthcare outcomes. The company completed the divestiture of its Spine division in 2025. The company sells its products to physicians, hospitals, and other healthcare facilities through company-owned subsidiaries and branches, as well as third-party dealers and distributors, in approximately 75 countries. The company is based in Portage, Michigan.

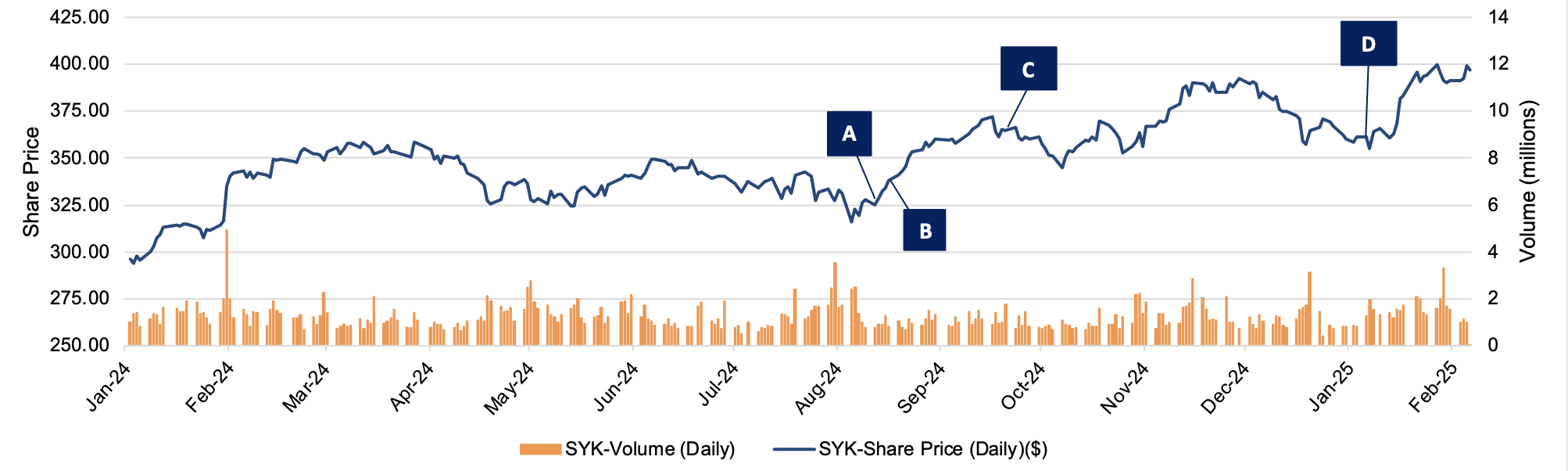

During 2024, Stryker Corporation completed a number of acquisitions, with a further deal announced in early January 2025. Stryker’s stock price rose 34%, from $296.23 per share at the beginning of 2024 to $397.17 per share in early February 2025. In October 2024, Stryker CEO Kevin Lobo highlighted the company’s recent acquisitions, stating that he expects them to contribute $300 million to 2025 sales. Mr. Lobo also stated that Stryker has “significant financial capacity for future deals,” having already spent $1.6 billion, and that “M&A will be the number one use of the company’s cash going forward.” Below, we summarize our observations on some of Stryker’s recent acquisitions and the company’s stock price at the relevant dates.


(A): Stryker Corporation agreed to acquire 100% of Webdr.ai Inc. (fka Care.ai Inc.), a Florida-based company, on August 12, 2024. The acquisition was completed on September 17, 2024. The terms of the deal were undisclosed. On the day of announcement, Stryker’s stock price was $325.20 per share. Webdr.ai develops and operates a sensor-based platform that detects and evaluates a range of patient behaviors; its primary offering is an artificial intelligence (AI)-powered platform that detects and predicts adverse events. The acquisition aims to strengthen Stryker’s growing healthcare IT offering and its portfolio of wirelessly connected medical devices.

(B): Stryker Corporation agreed to acquire 100% of Vertos Medical Inc. (fka X-Sten Corp.), a California-based company, on August 22, 2024. The acquisition was completed on October 1, 2024. The terms of the deal were undisclosed. On the day of announcement, Stryker’s stock price was $350.72 per share. Vertos, a medical device company, develops minimally invasive treatments for lumbar spinal stenosis. The Vertos acquisition aims to strengthen Stryker’s Orthopedics and Spine segment.

(C): Stryker Corporation acquired 100% of NICO Corporation, an Indiana-based company, on September 20, 2024. The terms of the deal were undisclosed. Stryker’s stock price on the transaction date was $364.81 per share. NICO designs and develops technology and products for corridor surgery, including cranial, spinal, and ENT (otolaryngology) procedures. It sells its products through distributors to physicians and hospitals in North America, the Middle East (including Israel), and Oceania (including Australia and New Zealand). The acquisition aims to complement Stryker’s Neurotechnology and Spine segments.

(D): On January 6, 2025, Stryker Corporation agreed to acquire 100% of Inari Medical, Inc. (NASDAQGS:NARI), a California-based company, for a total of $4.8 billion. Under the terms of the agreement, Stryker will commence a tender offer for all outstanding shares of common stock at $80 per share in cash. On the day of announcement, Stryker’s stock price was $361.36 per share. Inari builds novel, minimally invasive, catheter-based mechanical thrombectomy devices and accessories for specific vascular diseases in the United States. Per management, the acquisition should be accretive to Stryker’s Neurovascular business. Mr. Lobo noted that “the acquisition of Inari expands Stryker’s portfolio to provide life-saving solutions to patients who suffer from peripheral vascular diseases.” At announcement, the deal implied an enterprise value to total revenue multiple of 8.37x.
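To put the deal dates in context, the sketch below computes Stryker’s simple share price change from the start-of-2024 level ($296.23) to each of the dates noted in (A) through (D) and to early February 2025, using only the prices cited in this section. The labels and the `pct_change` helper are our own illustrative choices.

```python
# Stryker share prices cited in this section (USD per share).
START_2024 = 296.23  # beginning of 2024

PRICES = {
    "(A) Webdr.ai announced, Aug 12, 2024": 325.20,
    "(B) Vertos announced, Aug 22, 2024": 350.72,
    "(C) NICO acquired, Sep 20, 2024": 364.81,
    "(D) Inari announced, Jan 6, 2025": 361.36,
    "Early February 2025": 397.17,
}


def pct_change(start: float, end: float) -> float:
    """Simple (non-annualized) percentage change from start to end."""
    return (end / start - 1.0) * 100.0


changes = {label: pct_change(START_2024, price) for label, price in PRICES.items()}

for label, change in changes.items():
    print(f"{label:<38} ${PRICES[label]:>7.2f}  {change:+6.1f}% since start of 2024")
```

Based on the cited prices, the shares were up roughly 10% to 23% from the start of 2024 on the four deal dates and about 34% by early February 2025, consistent with the figure noted earlier.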

The Value of Carried Interest in Estate Planning: A Guide for Newly Formed Funds

As we stated in the March 2025 issue of Value Matters®, we believe that prudent federal estate and gift tax planning involves a lifetime horizon and adherence to best practices that yield optimal outcomes. When economic and market conditions present an opportunity, assets with low current values and potential for significant appreciation should be considered for efficient estate planning. One type of asset that fits this category is carried interest.

This article explores the strategic incorporation of carried interests in estate planning, particularly for newly formed private equity funds. It discusses the benefits and complexities of leveraging such interests under current economic conditions and tax regulations to optimize estate outcomes. We will discuss specific valuation approaches and methods in the valuation of carried interests in a future article.

Carried Interest Explained

What is carried interest? It is the profits interest that a private equity, venture capital, or hedge fund principal receives if the fund exceeds certain performance benchmarks. Carried interest is also referred to as performance allocation or incentive allocation or, in the case of real estate funds, as promote interest. Separately, fund principals also receive economics from management fees and from their direct investments in the fund.

Fund Structures and Carried Interest Allocation

A basic private equity fund structure is shown below. Typically, the fund principals form a general partner entity and a management company entity. The principals then raise capital from limited partners and make investments over the term of the fund. The management company receives management fees, often around 2% per year, for investment management services provided. The general partner entity typically invests alongside the limited partners and receives its pro-rata share of returns along with the limited partners. If the fund exceeds certain benchmarks, the general partner also receives carried interest, often around 20% of fund returns beyond the benchmark.
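The management fee and carried interest economics described above can be sketched in a few lines. This is a deliberately simplified, whole-fund illustration under our own assumptions: the 2% fee is charged on committed capital every year, all committed capital is treated as invested at inception, the benchmark is a hypothetical 8% annual hurdle compounded over the fund’s life, and carry is 20% of proceeds above that benchmark with no GP catch-up, clawback, or fee offsets. The general partner’s pro-rata return on its own investment is excluded. The function name, the hurdle rate, and the fund figures are hypothetical; actual limited partnership agreements vary widely.

```python
from dataclasses import dataclass


@dataclass
class GPEconomics:
    management_fees: float   # total fees paid to the management company
    benchmark: float         # proceeds required before any carry is earned
    carried_interest: float  # GP's share of proceeds above the benchmark


def gp_economics(
    committed: float,
    proceeds: float,
    years: int,
    fee_rate: float = 0.02,     # "often around 2% per year"
    carry_rate: float = 0.20,   # "often around 20% of fund returns beyond the benchmark"
    hurdle_rate: float = 0.08,  # hypothetical benchmark; not specified in the text
) -> GPEconomics:
    """Simplified whole-fund view of management fees and carried interest.

    Illustrative assumptions: fees on committed capital for every year, a hurdle
    compounded annually on committed capital, and carry equal to carry_rate times
    the excess of proceeds over the benchmark (no catch-up).
    """
    fees = fee_rate * committed * years
    benchmark = committed * (1 + hurdle_rate) ** years
    carry = carry_rate * max(0.0, proceeds - benchmark)
    return GPEconomics(fees, benchmark, carry)


# Hypothetical $100 million fund returning $200 million after five years.
result = gp_economics(committed=100.0, proceeds=200.0, years=5)
print(f"Management fees:  ${result.management_fees:.2f}M")
print(f"Benchmark:        ${result.benchmark:.2f}M")
print(f"Carried interest: ${result.carried_interest:.2f}M")
```

In this sketch, the hypothetical fund clears a benchmark of about $146.9 million, so the carried interest is about $10.6 million alongside $10 million of management fees. If the same fund returned only $140 million, it would fall short of the benchmark and the carried interest would be zero, while the management fees would be unchanged.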

It is important to note that private equity fund structures come in many forms, from basic to complex. Even though this article is focused primarily on private equity funds, similar concepts apply to certain hedge funds and venture capital funds.

Navigating Uncertainty and Valuation Challenges

Uncertainty exists regarding the fund’s performance, more so earlier in the fund’s life. When the fund entities are formed, and before capital is raised, there is also uncertainty regarding how much capital will be raised. These uncertainties result in low values for the fund entities at inception. The fund entity values will appreciate significantly if the fund is able to successfully raise capital and achieve strong investment returns.
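A stylized, probability-weighted view shows how these two uncertainties hold down value at inception. In the sketch below, every probability, payoff, and the discount rate are hypothetical and chosen only for illustration. The time to realization is held constant across both dates for simplicity, and the sketch is not a recommended valuation method. It shows that simply resolving the capital-raising uncertainty lifts the value of the carried interest, before any investment returns are known.

```python
# Stylized two-stage view of a new fund's carried interest. All inputs are hypothetical.
P_RAISE = 0.60  # probability the fund successfully raises capital

# Performance scenarios conditional on capital being raised:
# (description, conditional probability, carry received at end of fund, $ millions)
PERFORMANCE_SCENARIOS = [
    ("Below benchmark", 0.40, 0.0),
    ("Moderate returns", 0.35, 5.0),
    ("Strong returns", 0.25, 20.0),
]

DISCOUNT_RATE = 0.25      # hypothetical required rate of return
YEARS_TO_REALIZATION = 8  # held constant across both dates for simplicity


def expected_carry(scenarios) -> float:
    """Probability-weighted carry across performance scenarios."""
    if abs(sum(p for _, p, _ in scenarios) - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * payoff for _, p, payoff in scenarios)


def present_value(amount: float, rate: float, years: int) -> float:
    """Discount a single future amount back to today."""
    return amount / (1 + rate) ** years


conditional = expected_carry(PERFORMANCE_SCENARIOS)  # expected carry given a successful raise
at_inception = present_value(P_RAISE * conditional, DISCOUNT_RATE, YEARS_TO_REALIZATION)
after_raise = present_value(conditional, DISCOUNT_RATE, YEARS_TO_REALIZATION)

print(f"Value at inception:         ${at_inception:.2f}M")
print(f"Value after capital raised: ${after_raise:.2f}M")
```

In this stylized example, a successful capital raise alone increases the value by about two-thirds (a factor of 1 / 0.60). Strong realized returns would raise it further as the low-payoff scenarios fall away.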